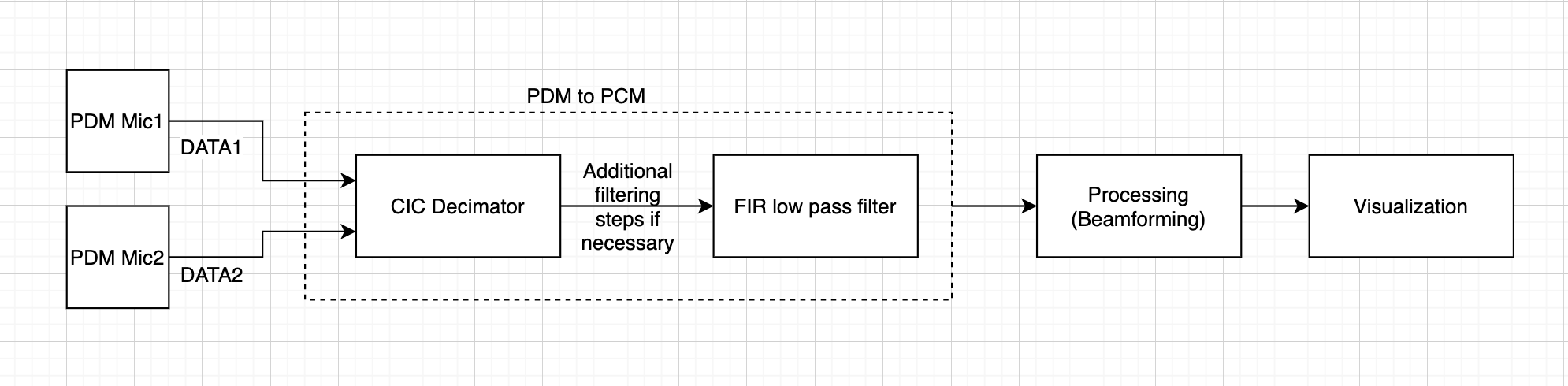

This week, I worked with Ryan to build delay-and-sum beamforming without relying on the external libraries that MATLAB provides.

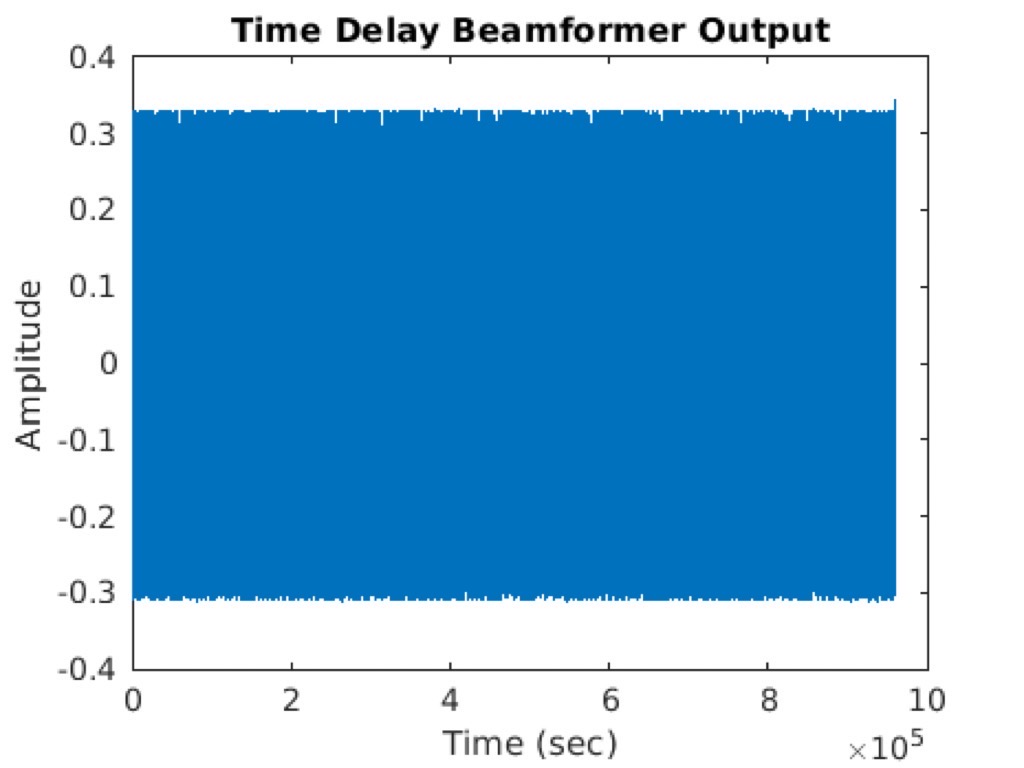

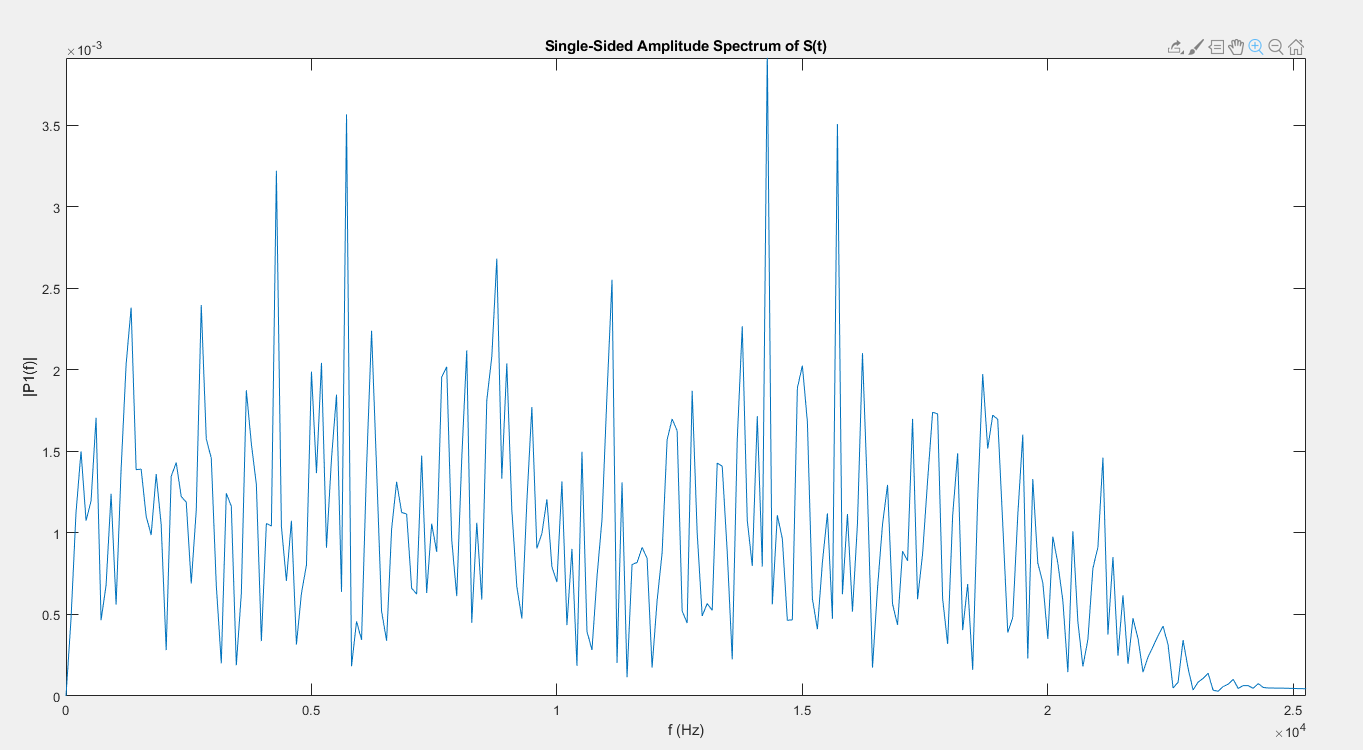

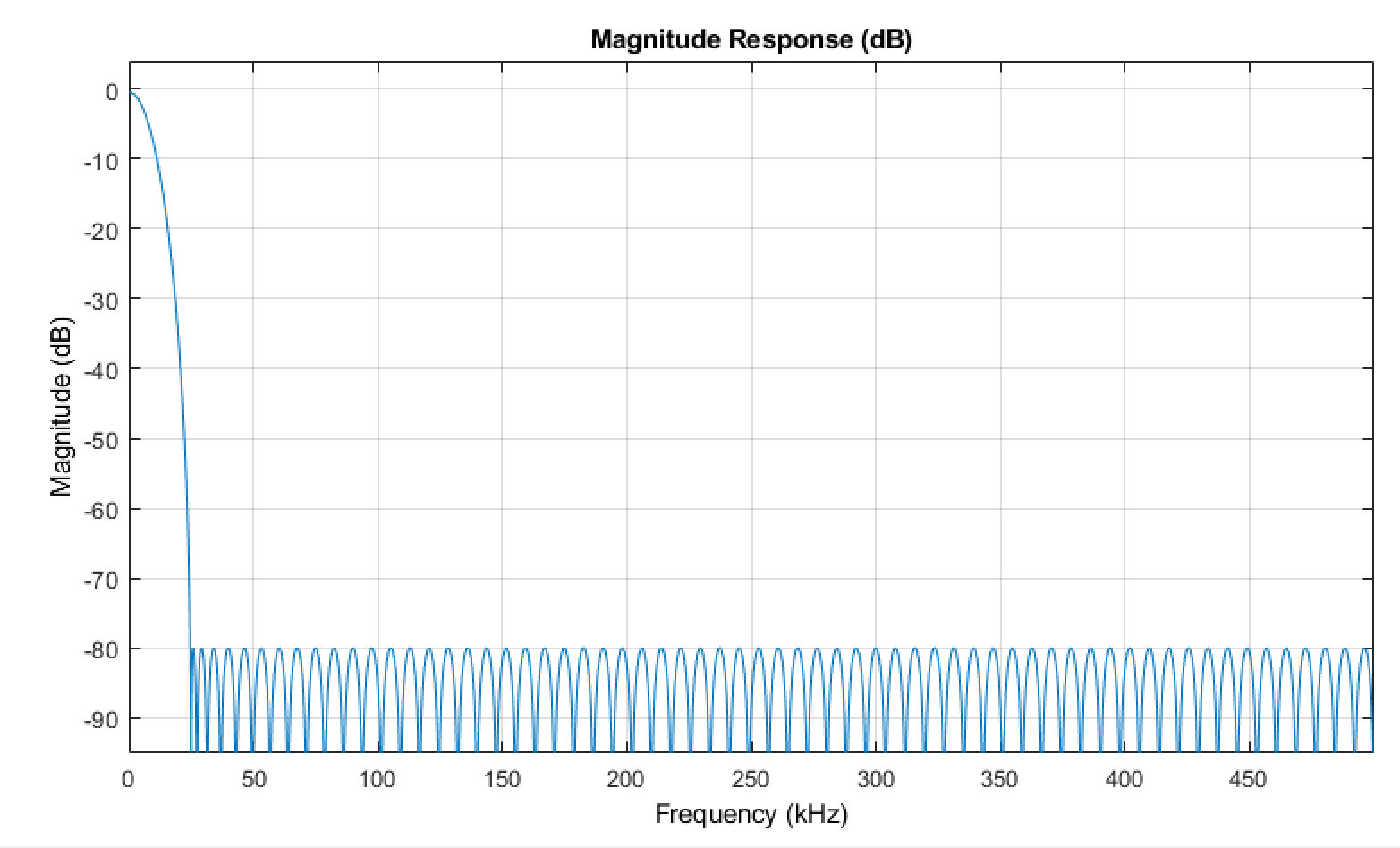

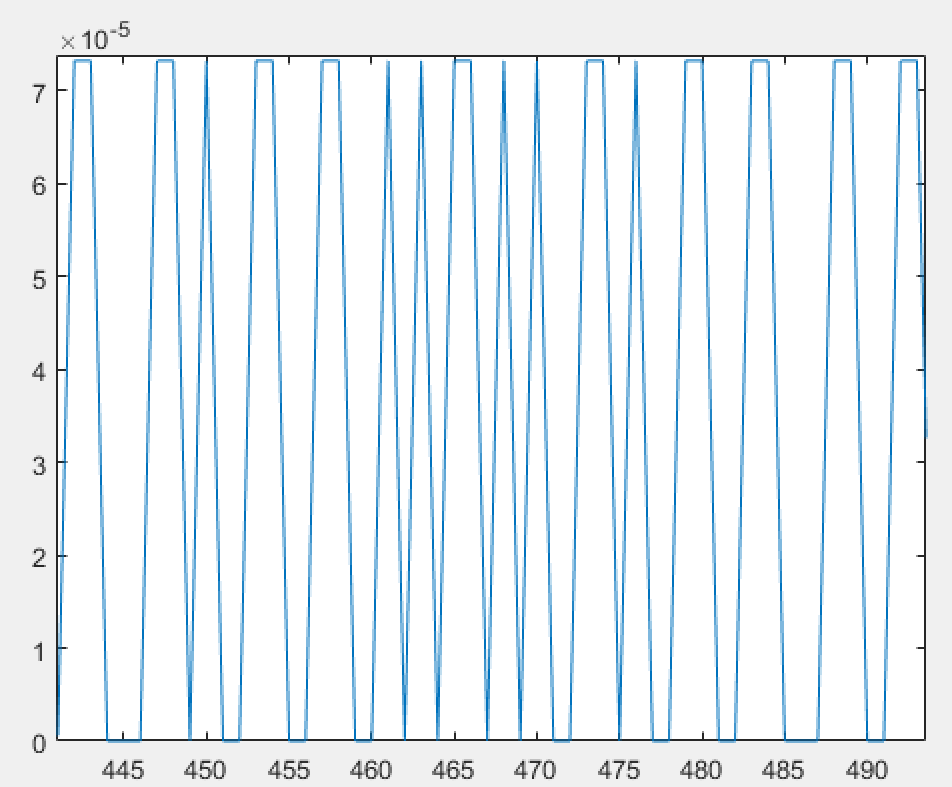

Delay-and-sum beamforming amplifies signals arriving from a specific direction while suppressing signals from other directions. It is the conventional beamforming method: it is easy to implement and has been widely used in array processing. Its main disadvantage is low resolution when targets are closely spaced. However, as long as we are able to detect the source of the leak for our project, this is acceptable.

Several steps were needed to produce the output. First, the signals captured by the microphones have similar-looking waveforms but arrive with different delays. I calculated each delay from the known angle, the distance between the microphones, and the distance from the sound source. Using simple geometry and algebra with polar coordinates, I obtained the x, y, and z components of the distance and divided the distance by the speed of sound to get the delay. I also tried two other delay calculation methods, but the current method gave better results. Next, the signal of each microphone was shifted by its delay. Finally, the shifted signals were summed and normalized by the number of microphone channels.
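The steps above can be sketched roughly as follows. This is a minimal Python illustration, not our MATLAB code: the function names `steering_delays` and `delay_and_sum`, the azimuth/elevation convention, the 343 m/s speed of sound, and the rounding of delays to whole samples are all assumptions made for the example.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for room-temperature air


def steering_delays(mic_positions, azimuth, elevation, distance):
    """Propagation delay (seconds) from the source to each microphone.

    The source is given in polar form (angles in radians, distance in
    meters) and converted to x, y, z. The angle convention is an assumption.
    """
    sx = distance * math.cos(elevation) * math.cos(azimuth)
    sy = distance * math.cos(elevation) * math.sin(azimuth)
    sz = distance * math.sin(elevation)
    return [math.dist((sx, sy, sz), m) / SPEED_OF_SOUND for m in mic_positions]


def delay_and_sum(signals, delays, fs):
    """Shift each channel by its delay, sum, and divide by the channel count.

    Delays are taken relative to the earliest channel and rounded to whole
    samples, which is a simplification.
    """
    ref = min(delays)
    shifts = [round((d - ref) * fs) for d in delays]
    # Only keep samples that exist in every shifted channel.
    n = min(len(s) - k for s, k in zip(signals, shifts))
    return [
        sum(s[i + k] for s, k in zip(signals, shifts)) / len(signals)
        for i in range(n)
    ]
```

When the delays match the true source position, the shifted waveforms line up and add coherently, so the averaged output keeps the source amplitude while misaligned sounds from other directions are attenuated.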

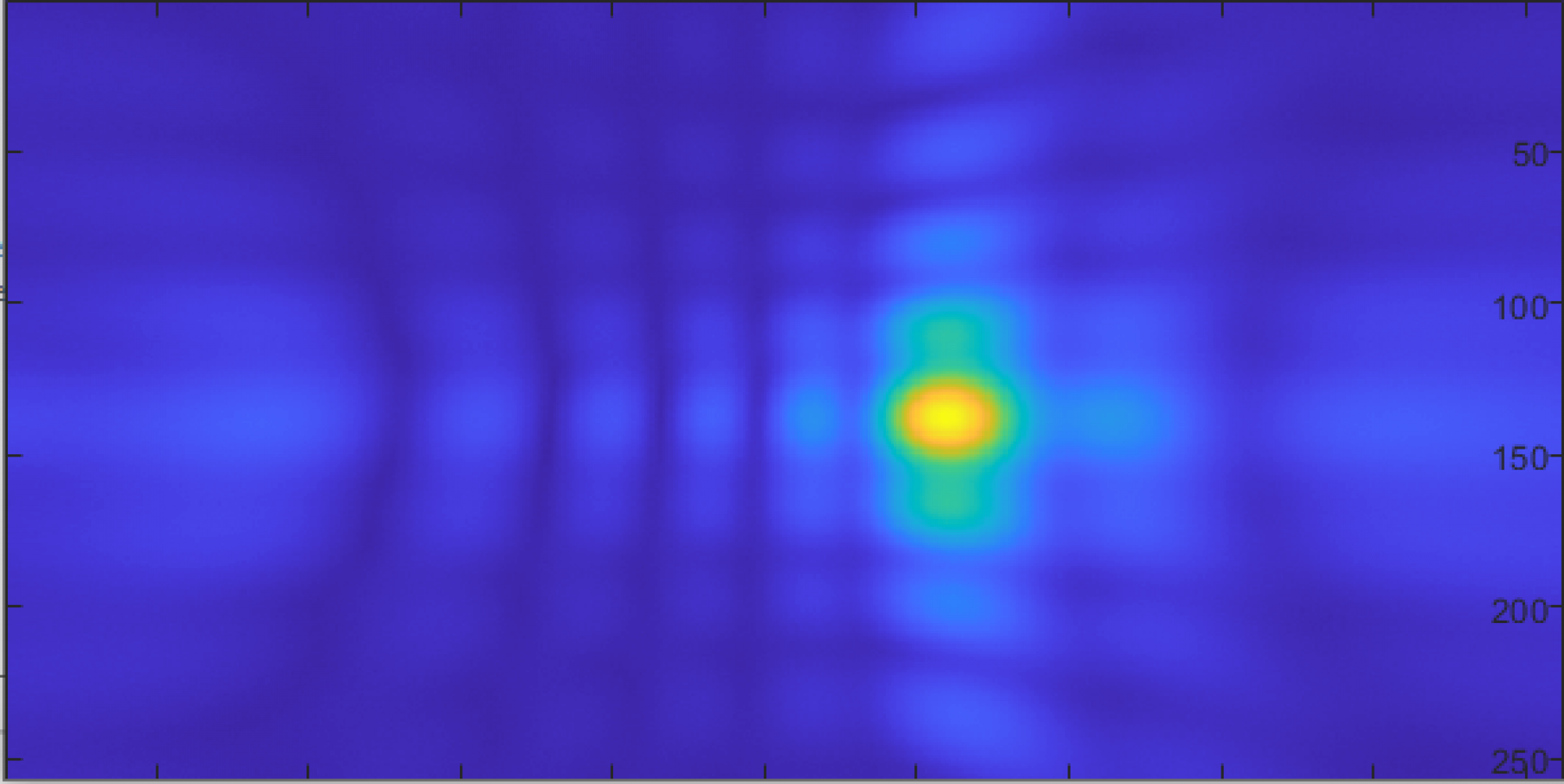

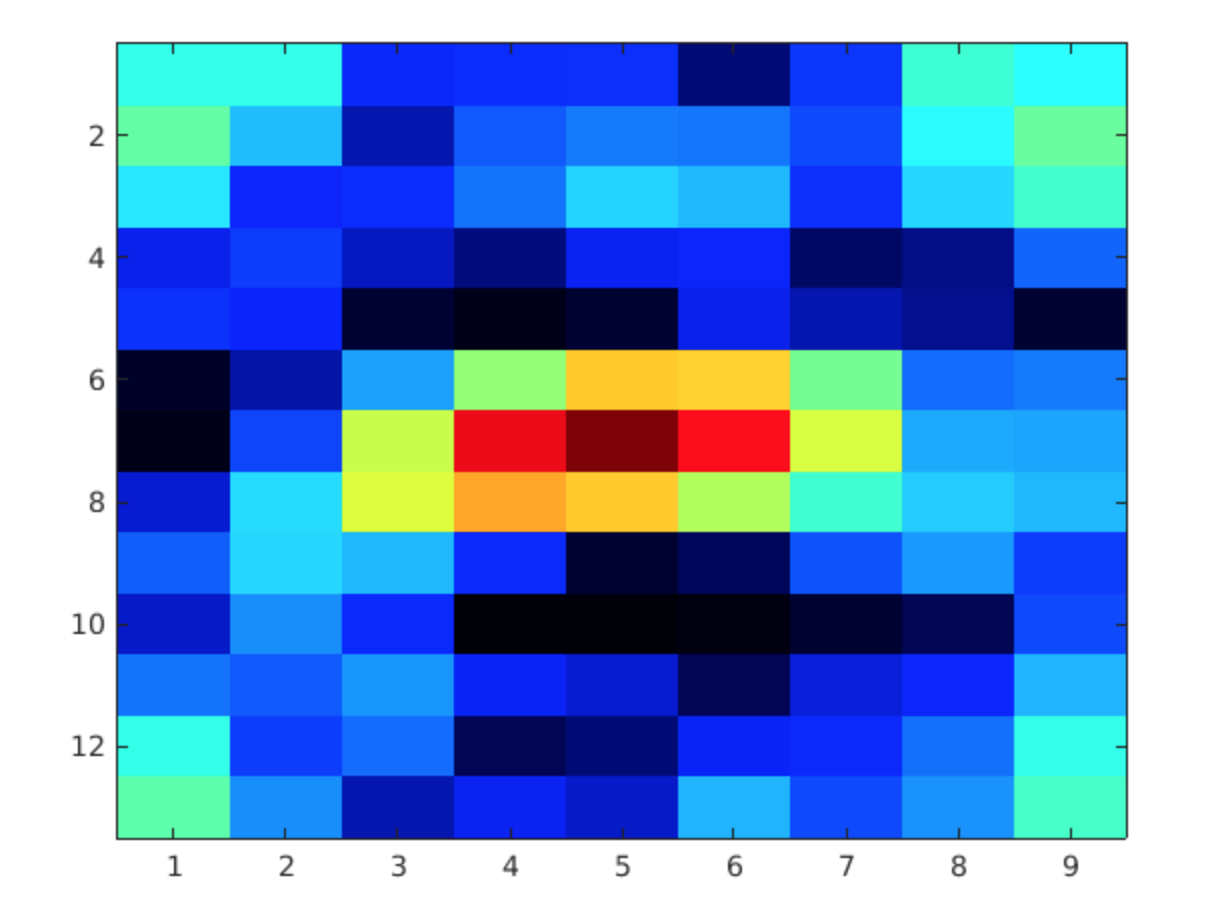

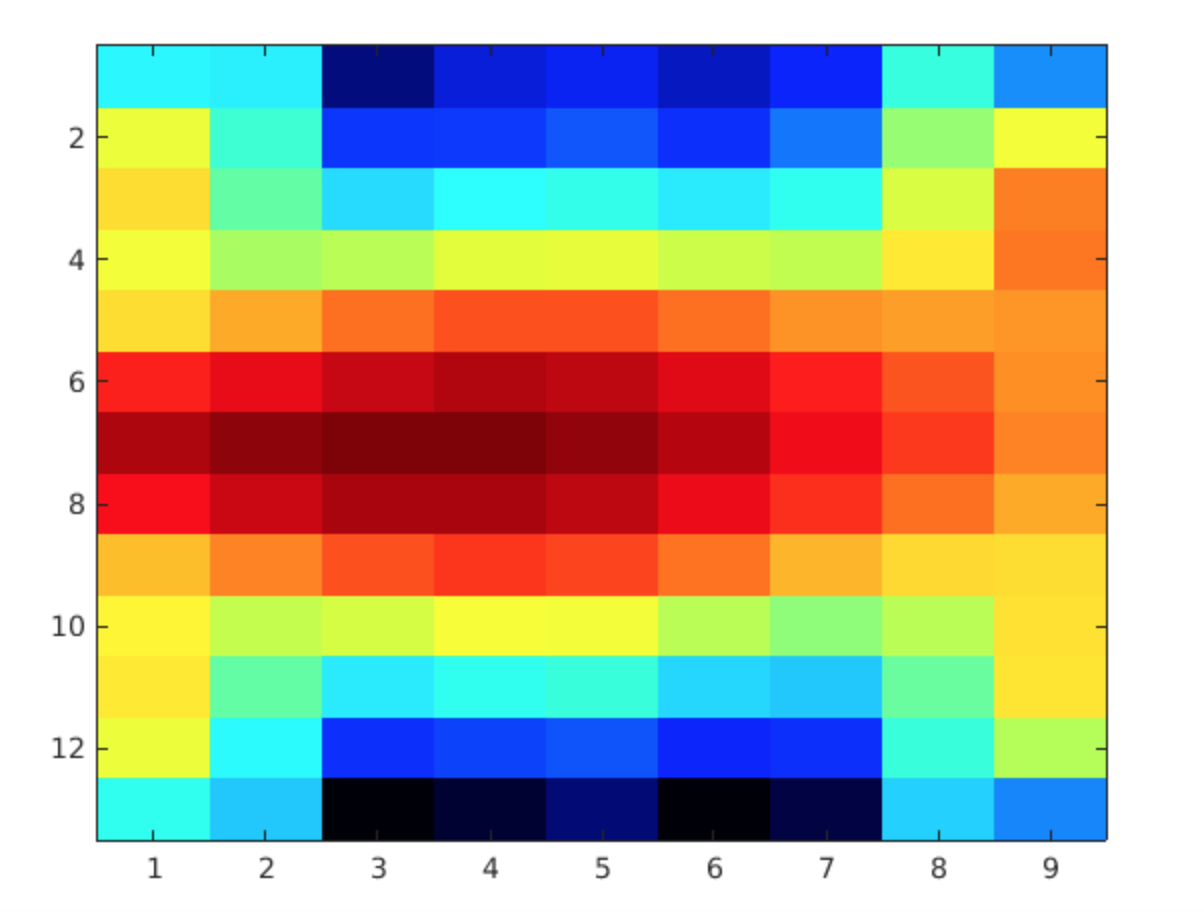

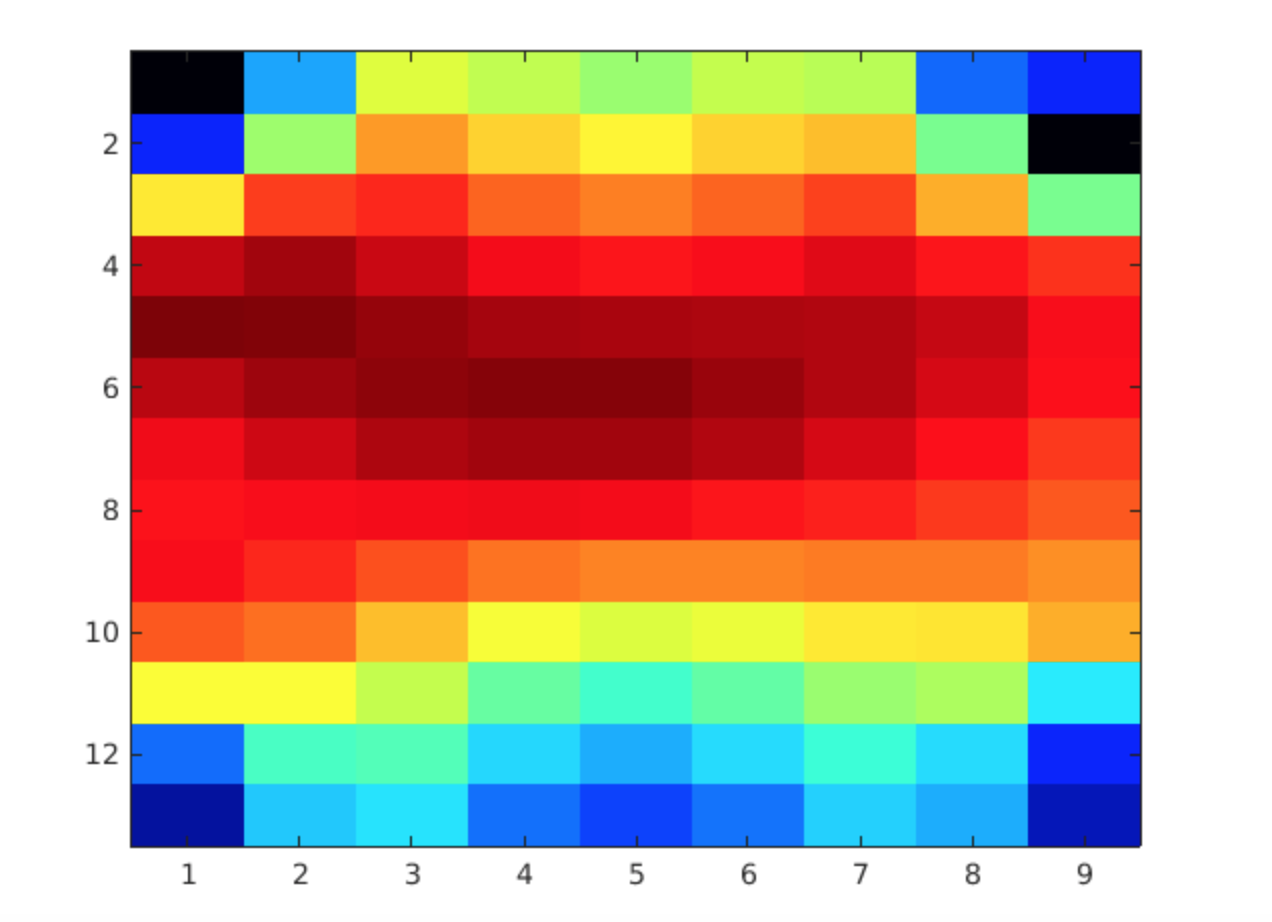

I visualized the beamforming output as a heatmap, with sound source regions shown in brighter colors. The resolution ended up being low, but the approximate location of the source is still visible in the heatmap.
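One way such a heatmap can be produced is to steer the beamformer at every point of a grid and record the output power at each point. The sketch below assumes that approach; the names `beam_power` and `power_map`, the planar grid at a fixed depth `z`, and the 343 m/s speed of sound are hypothetical choices for illustration rather than a description of our MATLAB implementation.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for room-temperature air


def beam_power(signals, mic_positions, point, fs):
    """Mean squared delay-and-sum output when steering at `point` (x, y, z)."""
    delays = [math.dist(point, m) / SPEED_OF_SOUND for m in mic_positions]
    ref = min(delays)
    # Whole-sample shifts relative to the nearest microphone (a simplification).
    shifts = [round((d - ref) * fs) for d in delays]
    n = min(len(s) - k for s, k in zip(signals, shifts))
    if n <= 0:
        return 0.0
    out = [
        sum(s[i + k] for s, k in zip(signals, shifts)) / len(signals)
        for i in range(n)
    ]
    return sum(v * v for v in out) / n


def power_map(signals, mic_positions, xs, ys, z, fs):
    """Grid of beam powers: rows follow ys, columns follow xs.

    Larger values correspond to brighter heatmap cells.
    """
    return [
        [beam_power(signals, mic_positions, (x, y, z), fs) for x in xs]
        for y in ys
    ]
```

The resulting nested list can be handed to any plotting routine that draws a 2D array as an image. The peak tends to be broad because nearby grid points produce similar delays, which is consistent with the low resolution noted above.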

2k Gaussian (source from center)

Synthesized Leak (source slightly off center to the left)

Real Leak (source slightly off center to the left)

Next week, I will work on the final report with my teammates!