https://drive.google.com/file/d/16BUmcdUff1Xxrdo6RqtjcvHanefeLMUc/view?usp=sharing

(PDF exceeded the maximum upload size).

ECE Capstone Project, Spring 2022: Jai Madisetty, Keshav Sangam, Raymond Xiao

This week, I worked on setting up the testing environment. Due to space constraints in HH and a lack of materials, we decided to use a 10×16 ft environment. We modified the design slightly because the robot’s large radius made it difficult to maneuver through the narrow obstacle course. Additionally, I finished the DWA path planning algorithm, modifying some of the logic in the frontier search portion of our code. All that is left now is integrating and tuning our overall system. Because our robot is autonomous, we need to test the system headlessly, which is quite difficult due to network latency. Our SLAM and path planning algorithms also do not run exactly in sync in real time. As a result, we plan to slow down our robot’s movement so that it will be easier to test.

Throughout the week, we finished programming our DWA path planning algorithm. Some struggles remain, however. Although our path planning works correctly in simulation, there are integration challenges when moving from simulation to reality. In particular, the SLAM algorithm matches the lidar point clouds very poorly during sudden large accelerations and at high speeds. Unfortunately, autonomous movement involves large accelerations, both translational and rotational. This is not much of a problem in simulation, where plenty of CPU resources are available because SLAM is not running in real time. Moving SLAM to real time severely limits the computational resources left for the rest of our algorithms (including path planning and our ArUco detection). The translational acceleration is less of a problem, since the SLAM algorithm can keep up with it through a decent range of speeds. Tomorrow, we are focusing on fine-tuning the maximum rotational velocity and acceleration curves to ensure the map we generate of the environment is as accurate as possible. In essence, our work leading up to the demo and final report primarily revolves around integration and optimization, which we can do in parallel with our subsystem verification and system validation.
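
To illustrate why the rotational velocity and acceleration limits matter, here is a minimal sketch of the "dynamic window" step that gives DWA its name: the set of velocities reachable within one control step. This is not our actual planner code. The `Limits` class, the function names, and every numeric value are assumptions chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Limits:
    """Hypothetical tuning parameters; the values used below are made up."""
    max_v: float   # maximum forward speed (m/s)
    max_w: float   # maximum rotational speed (rad/s)
    max_dv: float  # maximum translational acceleration (m/s^2)
    max_dw: float  # maximum rotational acceleration (rad/s^2)


def dynamic_window(v, w, limits, dt):
    """Return (v_lo, v_hi, w_lo, w_hi) reachable within one step of length dt.

    Assumes the current velocities (v, w) already lie within the limits
    and that the robot does not drive backwards.
    """
    v_lo = max(0.0, v - limits.max_dv * dt)
    v_hi = min(limits.max_v, v + limits.max_dv * dt)
    w_lo = max(-limits.max_w, w - limits.max_dw * dt)
    w_hi = min(limits.max_w, w + limits.max_dw * dt)
    return v_lo, v_hi, w_lo, w_hi


def sample_window(window, n_v=3, n_w=5):
    """Evenly sample candidate (v, w) pairs from the window (n_v, n_w >= 2)."""
    v_lo, v_hi, w_lo, w_hi = window
    vs = [v_lo + i * (v_hi - v_lo) / (n_v - 1) for i in range(n_v)]
    ws = [w_lo + j * (w_hi - w_lo) / (n_w - 1) for j in range(n_w)]
    return [(v, w) for v in vs for w in ws]


# Example with made-up limits: lowering max_w and max_dw narrows the
# rotational part of the window, which caps how sharply the robot can turn.
limits = Limits(max_v=0.3, max_w=1.0, max_dv=0.5, max_dw=2.0)
window = dynamic_window(v=0.2, w=0.0, limits=limits, dt=0.1)
candidates = sample_window(window)
```

A full DWA planner would then forward-simulate each candidate and score the resulting trajectories. That part is omitted here because the tuning described above only changes the limits.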

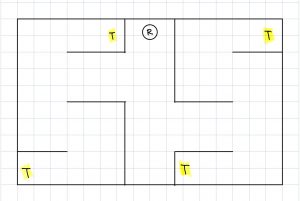

This week, our team focused on integrating the physical components of our system as well as the software stacks. We successfully installed the opencv-contrib library (which is needed for ArUco markers). We also integrated the DWA path planning algorithm into the rest of our system. In addition, we set up the environment we will be using for both testing and the demo. A picture of the updated diagram as well as a picture of the real life setup are shown below. The markers have not yet been added, but there will be 5 of them throughout this ‘room’. Overall, we are close to finishing; integration and testing are the only ‘real’ tasks left.

This week, I worked with Jai on setting up the testing and verification environment. Our current environment is a bit different from the one shown in the presentation. Originally, the size was 16×16 feet but due to size constraints, we changed it to 10×16 feet. In addition, we modified the layout of the ‘rooms’ and added hallways to make it more challenging to navigate. We also increased the number of ArUco markers in the environment from 3 to 5. Pictures of the setup as well as the updated diagram will be included in the team status report.

I also worked on integrating the ArUco marker detection code into our development environment. There were a lot of dependency issues that needed to be resolved for the modules to work. Overall, I think we are a bit behind, but we are close to reaching the final solution. For this upcoming week before the demo, I plan to help my teammates with integrating the rest of the system and with testing and debugging.

This week, I worked mainly on getting the path planning software coded up. However, due to a problem with getting a SLAM cartographer integrated with ROS, I was not able to take into consideration inputs from the SLAM subsystem. Thus, I also helped Keshav with the SLAM integration. We did eventually get the cartographer working; however, the localization of the robot within the created map is very inaccurate. We do plan on using sensor readings from the robot (odometer and possibly infrared sensors) to improve the localization. After we improve the localization, I plan on utilizing SLAM inputs (frontier of open areas in map) in the path planning for robustness.
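
As a rough sketch of what "frontier of open areas in map" means, the snippet below finds free cells that border unexplored space in a small occupancy grid. This is an illustration, not our implementation. The cell values follow the `nav_msgs/OccupancyGrid` convention (-1 unknown, 0 free, 100 occupied), and the function names and the nearest-frontier selection rule are assumptions.

```python
UNKNOWN = -1  # cell not yet observed
FREE = 0      # cell observed and empty


def find_frontier_cells(grid):
    """Return (row, col) of every free cell with an unknown 4-neighbor."""
    rows = len(grid)
    frontiers = []
    for r in range(rows):
        for c in range(len(grid[r])):
            if grid[r][c] != FREE:
                continue
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                in_bounds = 0 <= nr < rows and 0 <= nc < len(grid[nr])
                if in_bounds and grid[nr][nc] == UNKNOWN:
                    frontiers.append((r, c))
                    break
    return frontiers


def nearest_frontier(frontiers, robot_cell):
    """Pick the frontier cell closest to the robot (straight-line distance)."""
    if not frontiers:
        return None  # nothing left to explore
    return min(
        frontiers,
        key=lambda cell: (cell[0] - robot_cell[0]) ** 2
        + (cell[1] - robot_cell[1]) ** 2,
    )


# Tiny made-up map: the right-hand column is mostly unexplored.
grid = [
    [0, 0, -1],
    [0, 100, -1],
    [0, 0, 0],
]
frontiers = find_frontier_cells(grid)
goal = nearest_frontier(frontiers, robot_cell=(2, 0))
```

Straight-line distance ignores walls, so a real planner would more likely rank frontiers by path cost. This example also depends on the robot's cell being known, which is why poor localization blocks this step.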

The bulk of this week was devoted to integration, mainly with SLAM. We were facing many linker errors when trying to integrate the Google Cartographer system with our system in ROS. Fortunately, we did get this working, but it ate into a lot of our time this week. Overall, we have individual sub-systems working (detecting ArUco markers and path planning); however, we have not yet integrated these into our system due to the bottleneck of integrating the Google Cartographer system with ROS. Thus, it is difficult to say when we can start overall system tests. There may very well be a lot of tweaking to do with the software after the many parts are integrated. We are planning to come into lab tomorrow to work on integration.

This week, I worked on CV. I have some preliminary code that correctly identifies whether an ArUco marker is in the frame (including the cases of no markers or multiple markers). I am currently working on integrating this into our current system. The plan is to publish this data to a ROS topic so that the Jetson can use it for path planning/SLAM.

I also helped with setting up and integrating more of the system this week. The SLAM node has been installed and seems to be working correctly. The obstacle mapping seemed accurate when we tested it, but the localization is not tuned well.

For this upcoming week, I plan to finish the CV ROS integration and help the rest of my team with setting up our testing environment.

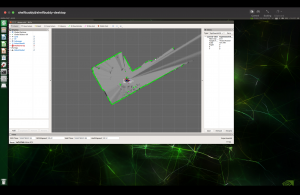

Below is an image of the SLAM visualization as well as the current location the robot is in. As you can see, the data is pretty accurate; the walls and hallway are clearly defined.

EDIT: I forgot to upload a picture of the SLAM visualization. It is below:

This week, we focused on integration. As of right now, SLAM is being integrated with path planning, but we are facing some critical problems. While the map that our SLAM algorithm builds is accurate, the localization accuracy is extremely poor. This makes it impossible to actually path plan. The poor localization accuracy stems from the fact that when we simulate our lidar/odometry data to test our SLAM algorithm, there is too little noise in the data to expose poor performance; thus, it is difficult to tune the algorithm without running it directly on the robot. Manually introducing noise into the simulated data did not help improve the tuning parameters. To solve this problem, we are going to record a ROS bag (a collection of all messages being published across the ROS topics) so that we don’t have to generate simulated data, instead taking sensor readings directly from the robot. By recording this data into a bag, we can replay the data and tune the SLAM algorithm against it. I believe we are still on track since we have a lot of time to optimize these parameters. We also worked on the final presentation slides and started writing the final report.
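
Before tuning against a recorded bag, it can help to check that the bag actually contains the lidar and odometry messages at the expected rates. The sketch below assumes ROS 1 and its `rosbag` Python package. The helper names are hypothetical, and the topic names `/scan` and `/odom` in the usage note are assumptions rather than our actual topics.

```python
from collections import defaultdict


def topic_rates(messages):
    """Summarize an iterable of (topic, msg, stamp_seconds) tuples.

    Returns {topic: (message_count, average_rate_hz)}.
    """
    stamps = defaultdict(list)
    for topic, _msg, t in messages:
        stamps[topic].append(t)
    rates = {}
    for topic, ts in stamps.items():
        span = max(ts) - min(ts)
        hz = (len(ts) - 1) / span if span > 0 else 0.0
        rates[topic] = (len(ts), hz)
    return rates


def read_bag(path, topics):
    """Yield (topic, msg, stamp_seconds) from a ROS 1 bag file.

    rosbag is imported lazily so topic_rates can be used without ROS.
    """
    import rosbag

    with rosbag.Bag(path) as bag:
        for topic, msg, t in bag.read_messages(topics=topics):
            yield topic, msg, t.to_sec()
```

On a machine with ROS installed, something like `topic_rates(read_bag("run1.bag", ["/scan", "/odom"]))` would give per-topic counts and rates, where `run1.bag` is a placeholder file name. A topic that is missing or arrives much slower than expected would point to a recording problem rather than a SLAM tuning problem.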