This past week I got acquainted with OpenCV and its libraries. I wrote a function that captures webcam data both as a continuous stream and on demand, when the function is invoked. I also applied the Canny edge filter (for thickness detection) to a captured image and increased its threshold (proof below). This is necessary for the computer vision part of the project because edge detection will be the primary way to detect meat thickness for the cooking time estimation.

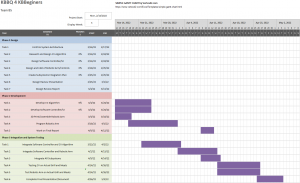

I am currently on schedule. Exploring OpenCV's features and trying out some of its tools is important before starting the real work of building tools for the project. Furthermore, finding the limitations of some computer vision methods is important before the design review.

Next week’s deliverable: a rudimentary form of blob detection that uses OpenCV to capture and process an image. This is necessary because the action that kick-starts the cooking process is a user placing meat in front of the camera, and blob detection is needed to determine whether an object is present.