This week my efforts were focused on research and slide preparation. On the research end, I looked into audio filtering methods, aws setup, and methods of testing. I looked into the ReSpeaker, PyAudio, Audacity, and SciPi libraries for methods of audio processing and to see what we could leverage. I also looked for research papers for processing the audio, microphone feedback, and user design. I also looked into methods of testing like stress testing to make our system more robust.

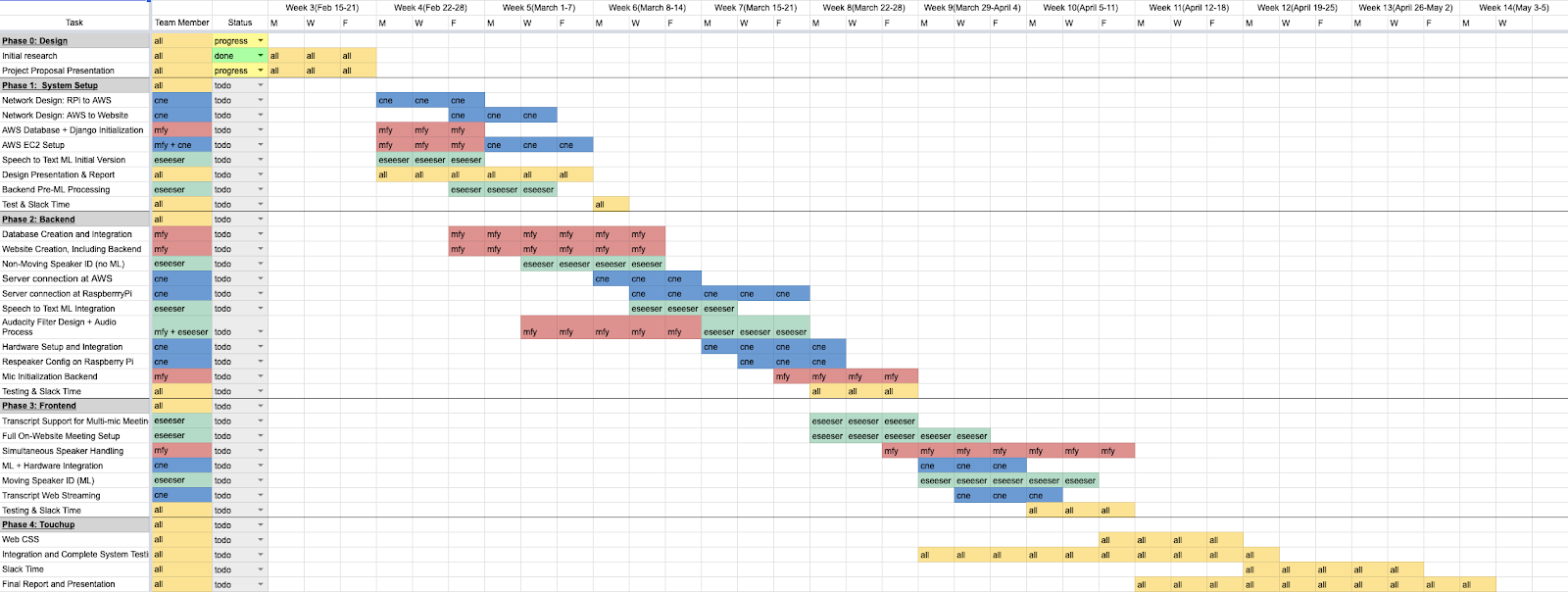

I believe that I am currently on schedule. Our project proposal slides are complete and our initial gantt chart has been created.

From our gantt chart, I will be starting to setup the AWS server and website next week as well as starting to prepare the design presentation.