https://www.youtube.com/watch?v=3HcLRrBb7zM

Carnegie Mellon ECE Capstone, Spring 2022; Tom Scherlis, Sam Zeloof, Graham MacFarquhar

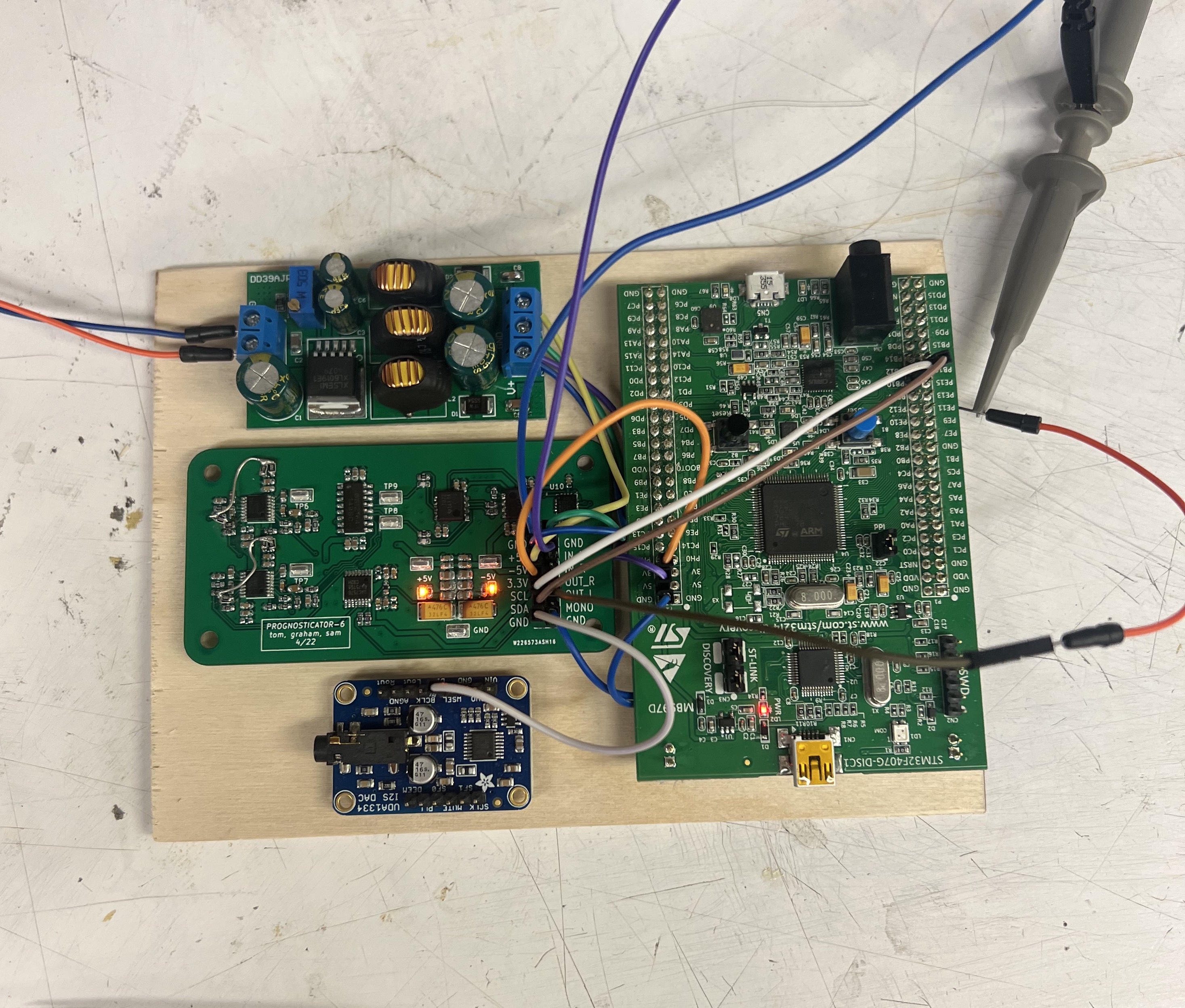

This week I made significant progress on the electronics. The filter board has been completely tested and is ready for integration into the synth. I built a testbench using an STM32 and I2S DAC to drive signals into the analog board for testing purposes:

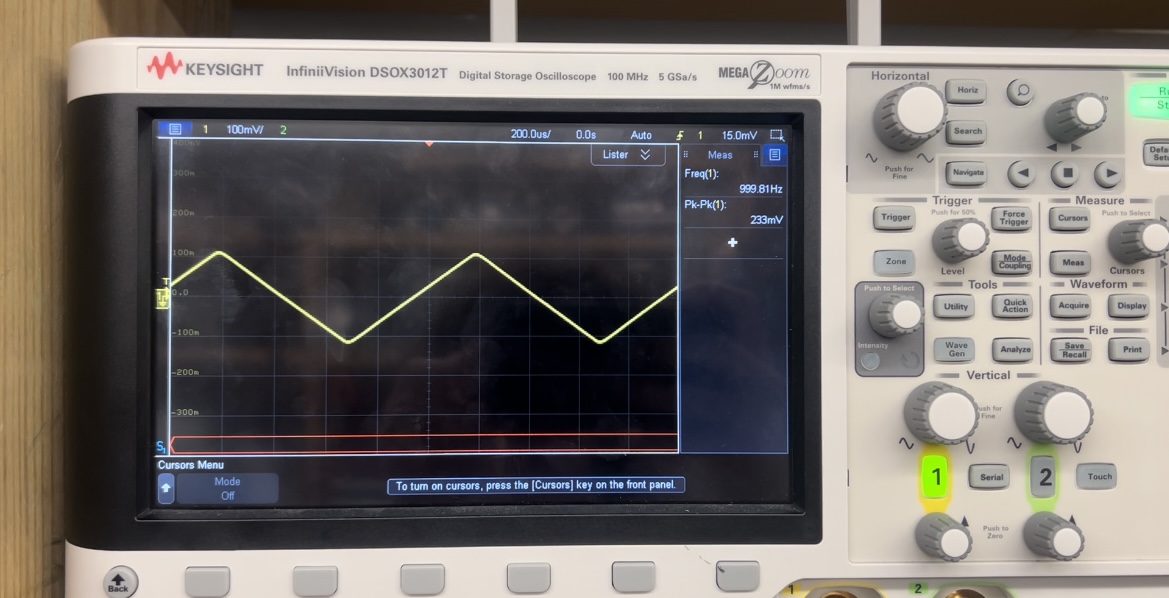

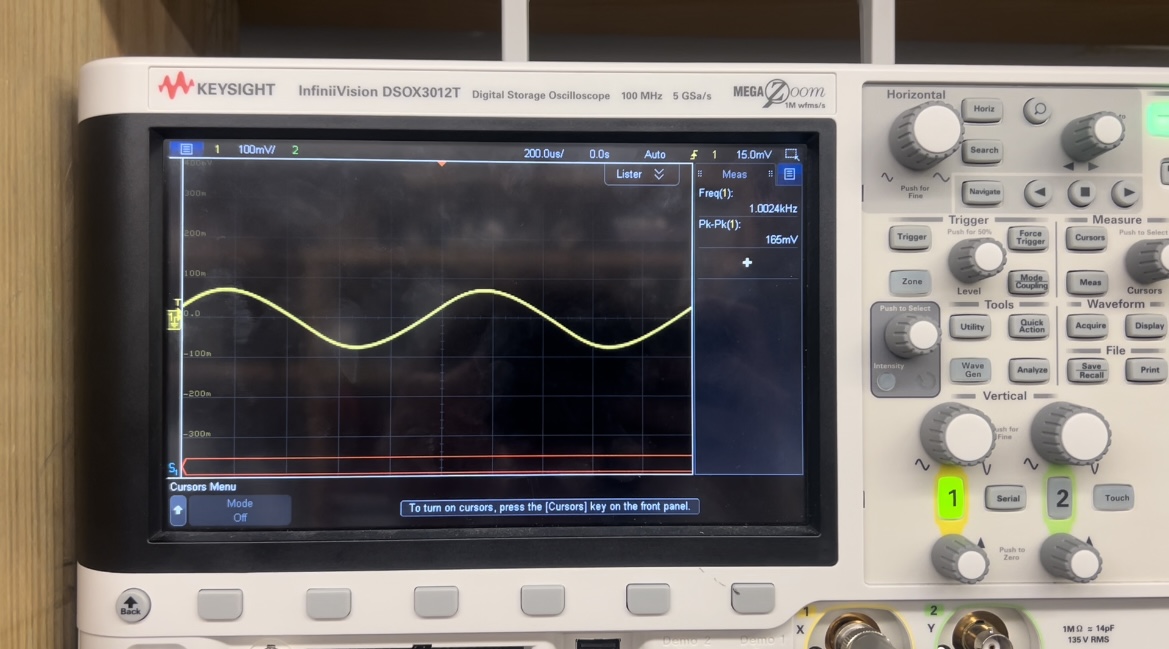

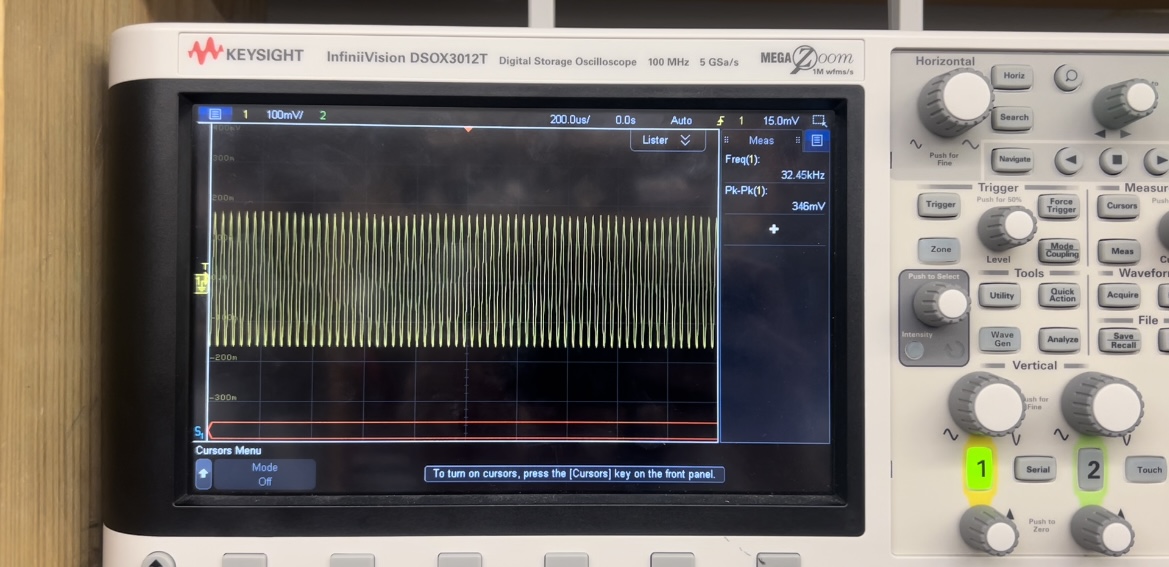

I was able to measure noise, channel matching, filter cutoff performance, and total harmonic distortion.

Here’s a test with a triangle wave input. The first image is the input, the second is low-pass filtered (higher harmonics removed), and the third is with 60 dB resonance (higher harmonics and resonance added back).
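To make the "higher harmonics removed" description concrete, here is a minimal sketch of the harmonic content of an ideal triangle wave. It does not model the actual filter board: `ideal_lowpass` is a hypothetical brick-wall idealization, and the 440 Hz fundamental and 1 kHz cutoff are arbitrary illustrative values. An ideal triangle wave contains only odd harmonics whose amplitudes fall off as 1/n².

```python
import math

def triangle_harmonics(n_max):
    """Relative amplitudes of an ideal triangle wave: odd harmonics only, 1/n^2."""
    return {n: 1.0 / n**2 for n in range(1, n_max + 1, 2)}

def thd(harmonics):
    """RMS sum of all harmonics above the fundamental, relative to the fundamental."""
    fundamental = harmonics[1]
    rest = sum(a * a for n, a in harmonics.items() if n > 1)
    return math.sqrt(rest) / fundamental

def ideal_lowpass(harmonics, fundamental_hz, cutoff_hz):
    """Brick-wall low-pass (an idealization): drop every harmonic above the cutoff."""
    return {n: a for n, a in harmonics.items() if n * fundamental_hz <= cutoff_hz}

raw = triangle_harmonics(199)
filtered = ideal_lowpass(raw, fundamental_hz=440.0, cutoff_hz=1000.0)

print(f"harmonic content of the raw triangle: {thd(raw):.4f}")
print(f"harmonics left after the low-pass: {sorted(filtered)}")
```

With the cutoff below the third harmonic, only the fundamental survives, which is why a heavily low-pass filtered triangle looks close to a sine on the scope.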

In the final week, I will integrate this with the rest of the synth and connect it to our final microcontroller choice (Teensy 4.1). The main deliverables this week are oscillator code written in C to drive this board and a functional check once everything is completely integrated. We are still about a week behind schedule, but given that we have no classes this week, we will still be able to get everything done.
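As a rough illustration of what the oscillator code could look like, here is a phase-accumulator sawtooth sketched in Python for brevity (the planned firmware is in C). The 44.1 kHz sample rate, the 32-bit accumulator width, and the function names are all assumptions, not details from the project.

```python
SAMPLE_RATE = 44_100   # Hz; assumed output rate of the I2S DAC
PHASE_BITS = 32        # assumed accumulator width
PHASE_MASK = (1 << PHASE_BITS) - 1

def phase_increment(freq_hz, sample_rate=SAMPLE_RATE):
    """Amount the phase advances per sample for the requested frequency."""
    return int(round(freq_hz * (1 << PHASE_BITS) / sample_rate))

def sawtooth_block(freq_hz, n_samples, phase=0):
    """Generate n_samples of a signed 16-bit sawtooth; returns (samples, next_phase)."""
    inc = phase_increment(freq_hz)
    out = []
    for _ in range(n_samples):
        # Take the top 16 bits of the phase and recentre them around zero.
        out.append((phase >> (PHASE_BITS - 16)) - 32768)
        # Wrapping the accumulator is what produces the periodic ramp.
        phase = (phase + inc) & PHASE_MASK
    return out, phase

samples, next_phase = sawtooth_block(441.0, 100)
print(samples[:4], next_phase)
```

Returning the phase lets consecutive blocks join without a click. In C, the same wraparound comes for free from unsigned 32-bit overflow.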

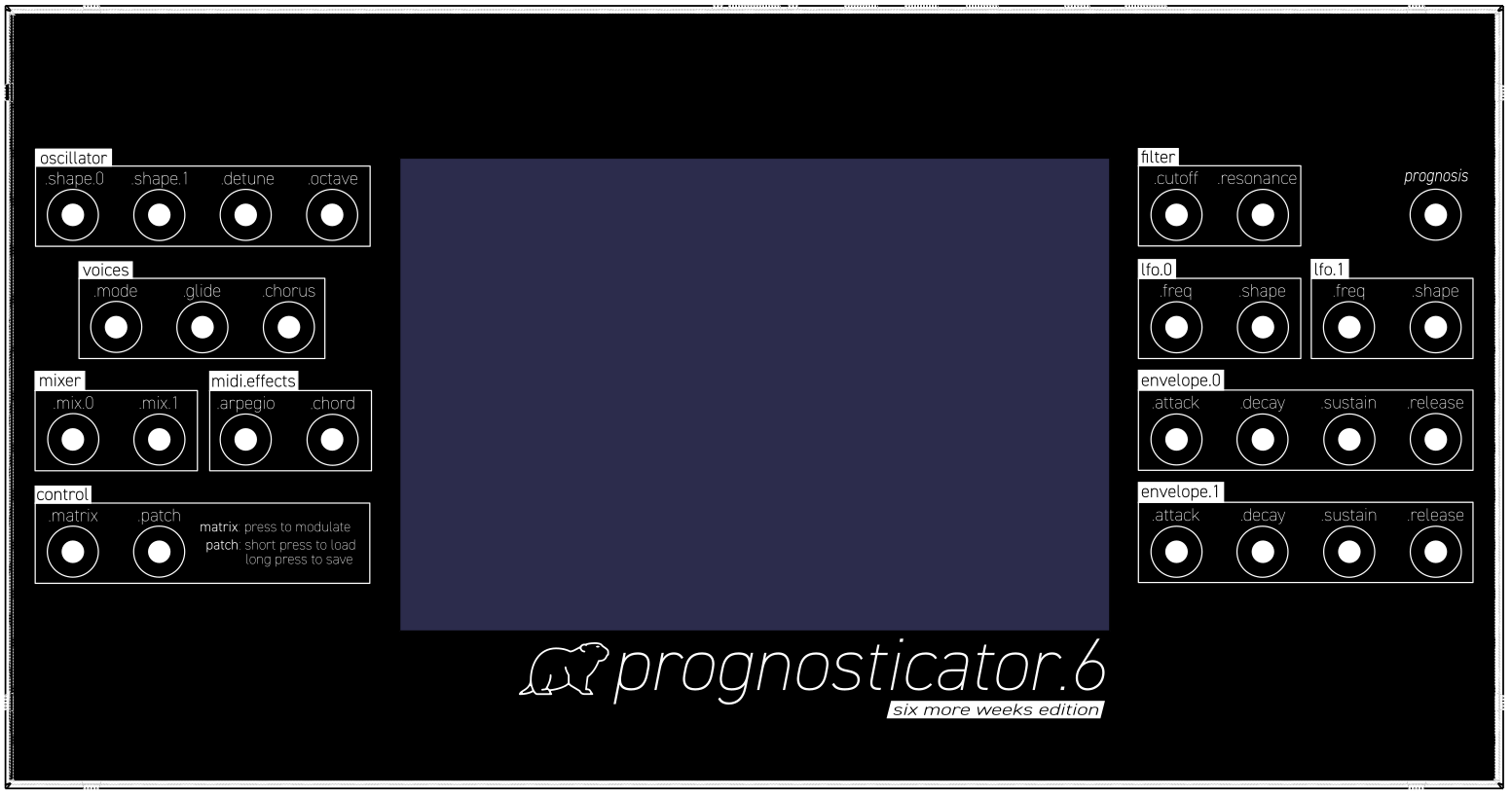

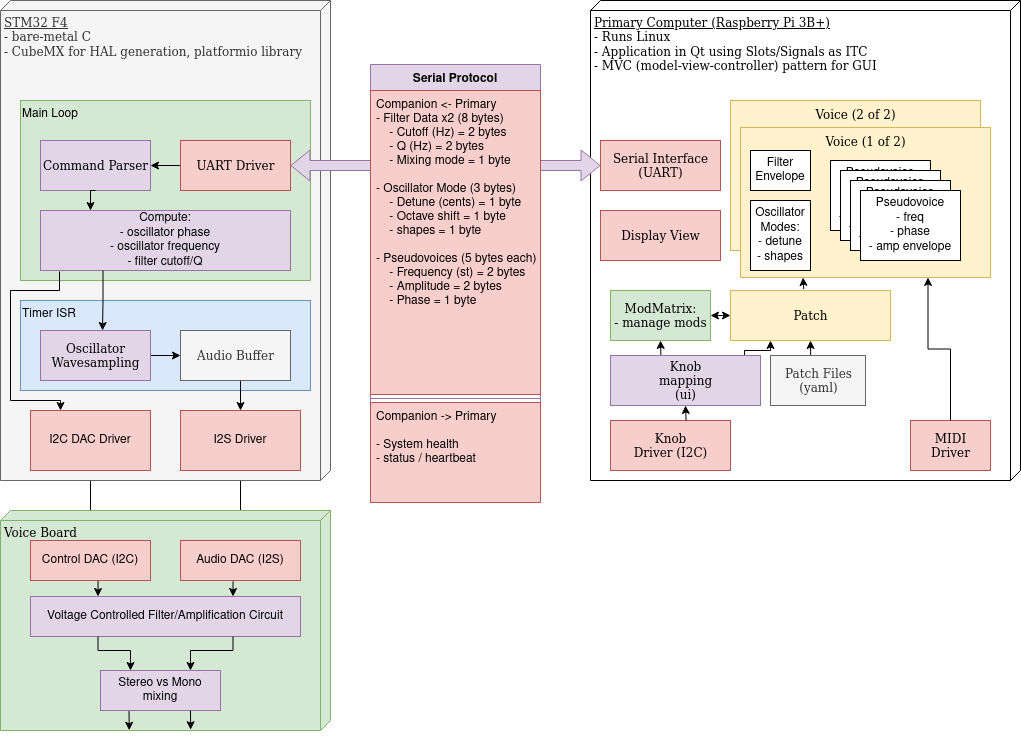

This week I finalized the user interface design for the front panel and began software implementation, including finalizing the software architecture for the primary computer (Raspberry Pi), the co-processor (STM32), and the interaction protocol between them.

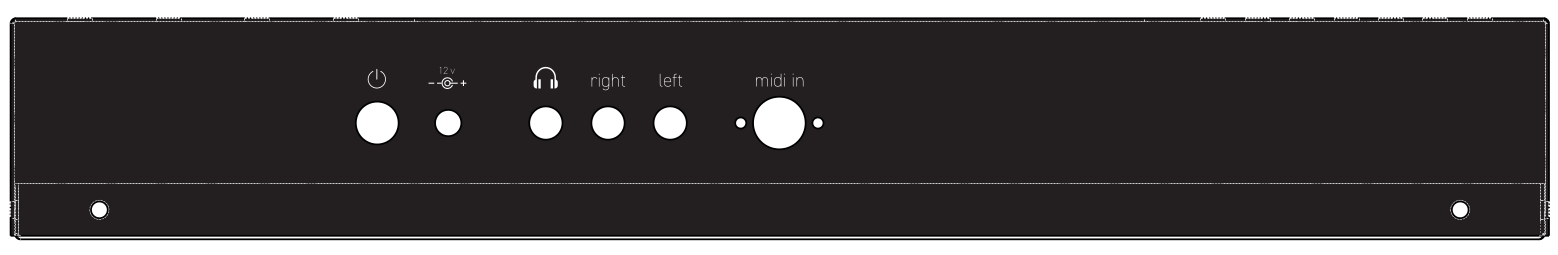

Detailed PDF of the enclosure design: panel_sheetmetal_engraved_textpoly

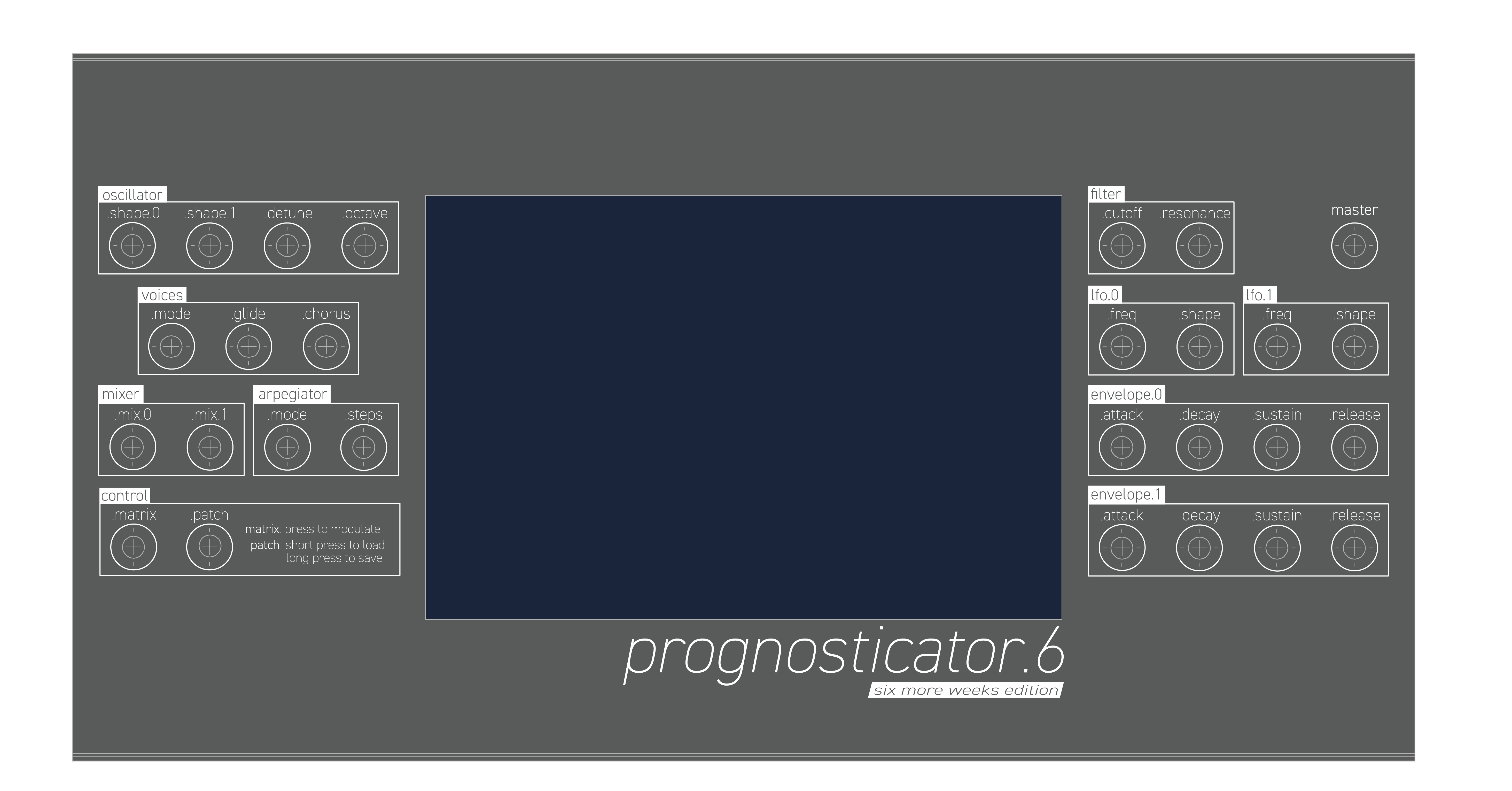

The design focuses on simplicity and consistency, with an emphasis on sharp corners and circles. It relies on simple shapes that mirror the mechanical design of the enclosure and merge seamlessly with the geometric designs of the actual GUI application.

After production, the cases look like this. They’re black anodized aluminum, so the pattern is just laser etched onto them.

Software architecture is done, and I’m working to implement the system in C++/Qt currently. My goal for this week is to produce audio and finish the core software (everything except the GUI itself).

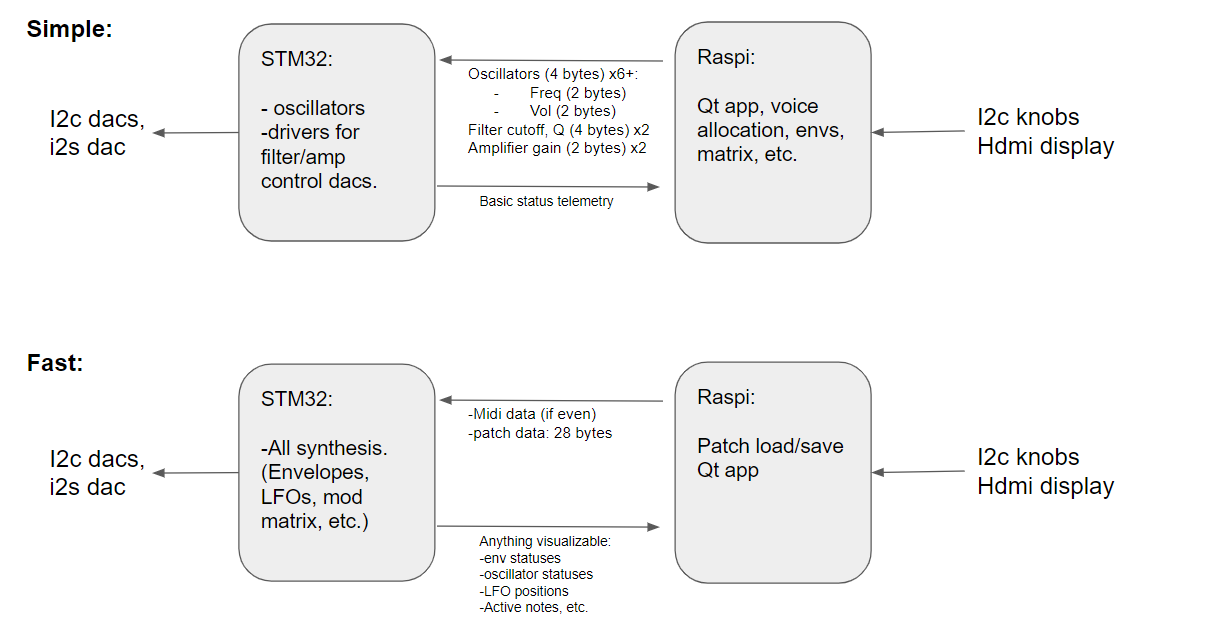

Software architecture:

A significant amount of work still needs to happen in the GUI space to finish this. I’ve been working on the voice/pseudovoice/patch architecture, which is the core of the sound synthesis. Voices control the voice hardware, including the dedicated VCF/VCA circuits (voltage-controlled filters and amplifiers). Pseudovoices are oscillator pairs with amplifier envelopes, which allow multiple notes to be allocated to the same hardware voice, although they must share VCF/VCA parameters. The voicing modes described in the design report explain how this process works.
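The voice/pseudovoice relationship can be sketched as a small data model. This is only an illustration: the real implementation is in C++/Qt, the class and field names are hypothetical, and the "least-loaded voice" policy is an assumption standing in for the voicing modes in the design report.

```python
from dataclasses import dataclass, field

@dataclass
class Pseudovoice:
    """One oscillator pair plus its own amplifier envelope."""
    note: int                 # MIDI note number
    env_level: float = 0.0

@dataclass
class Voice:
    """One hardware voice: a VCF/VCA whose settings are shared by its pseudovoices."""
    cutoff: float = 1.0
    resonance: float = 0.0
    pseudovoices: list = field(default_factory=list)

class Allocator:
    def __init__(self, n_voices, per_voice):
        self.voices = [Voice() for _ in range(n_voices)]
        self.per_voice = per_voice

    def note_on(self, note):
        """Put the note on the least-loaded voice; return its index, or None if full."""
        idx = min(range(len(self.voices)),
                  key=lambda i: len(self.voices[i].pseudovoices))
        voice = self.voices[idx]
        if len(voice.pseudovoices) >= self.per_voice:
            return None
        voice.pseudovoices.append(Pseudovoice(note))
        return idx

    def note_off(self, note):
        """Release every pseudovoice currently playing the note."""
        for voice in self.voices:
            voice.pseudovoices = [p for p in voice.pseudovoices if p.note != note]

alloc = Allocator(n_voices=2, per_voice=2)
print([alloc.note_on(n) for n in (60, 64, 67, 70, 72)])
```

The fifth note is refused because both hardware voices already carry two pseudovoices each. The two notes on each voice have separate amplitude envelopes but pass through the same filter settings.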

This week, I want to finish all of the Primary Computer software except for the GUI software, and put together the synthesizer.

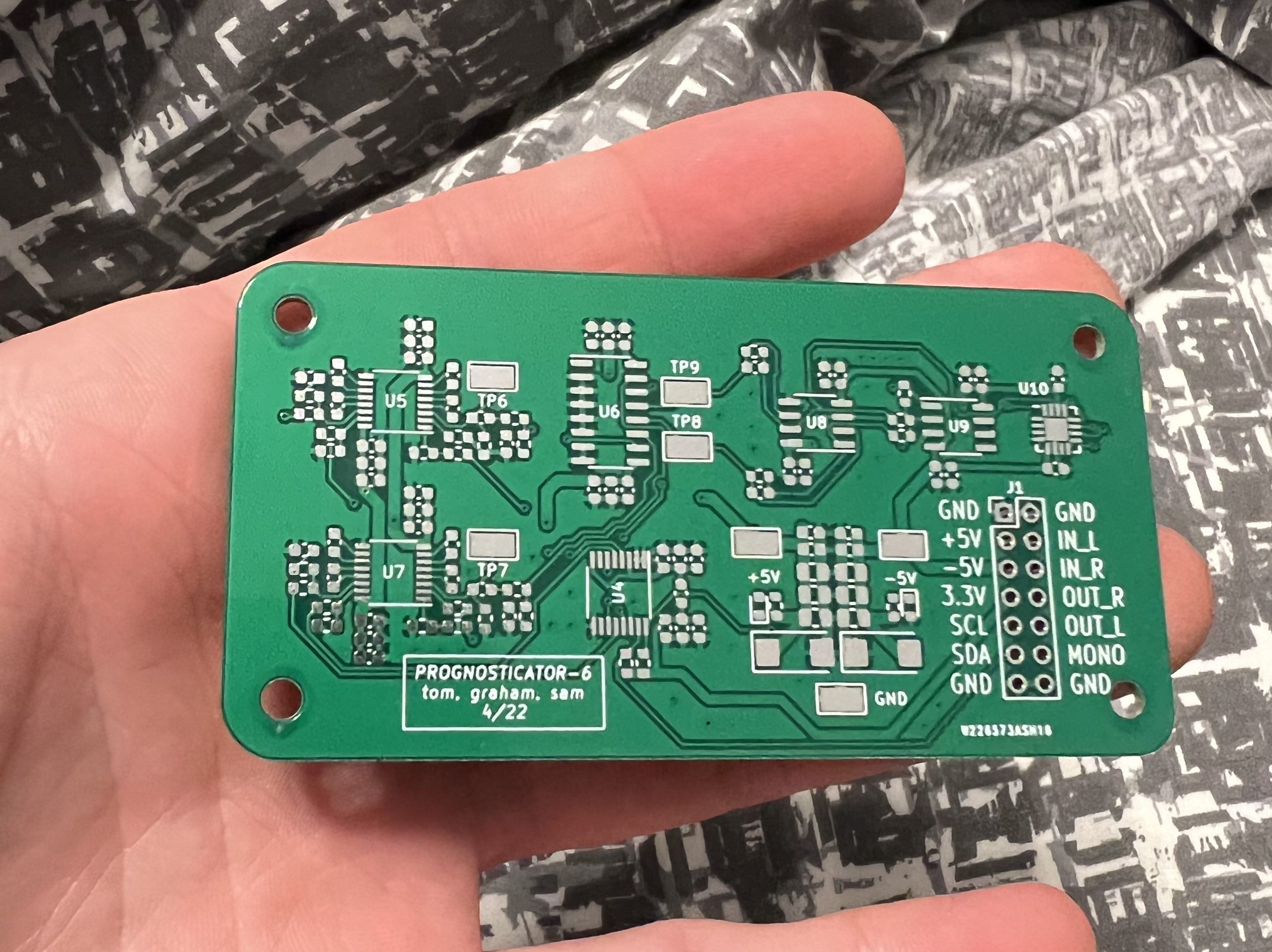

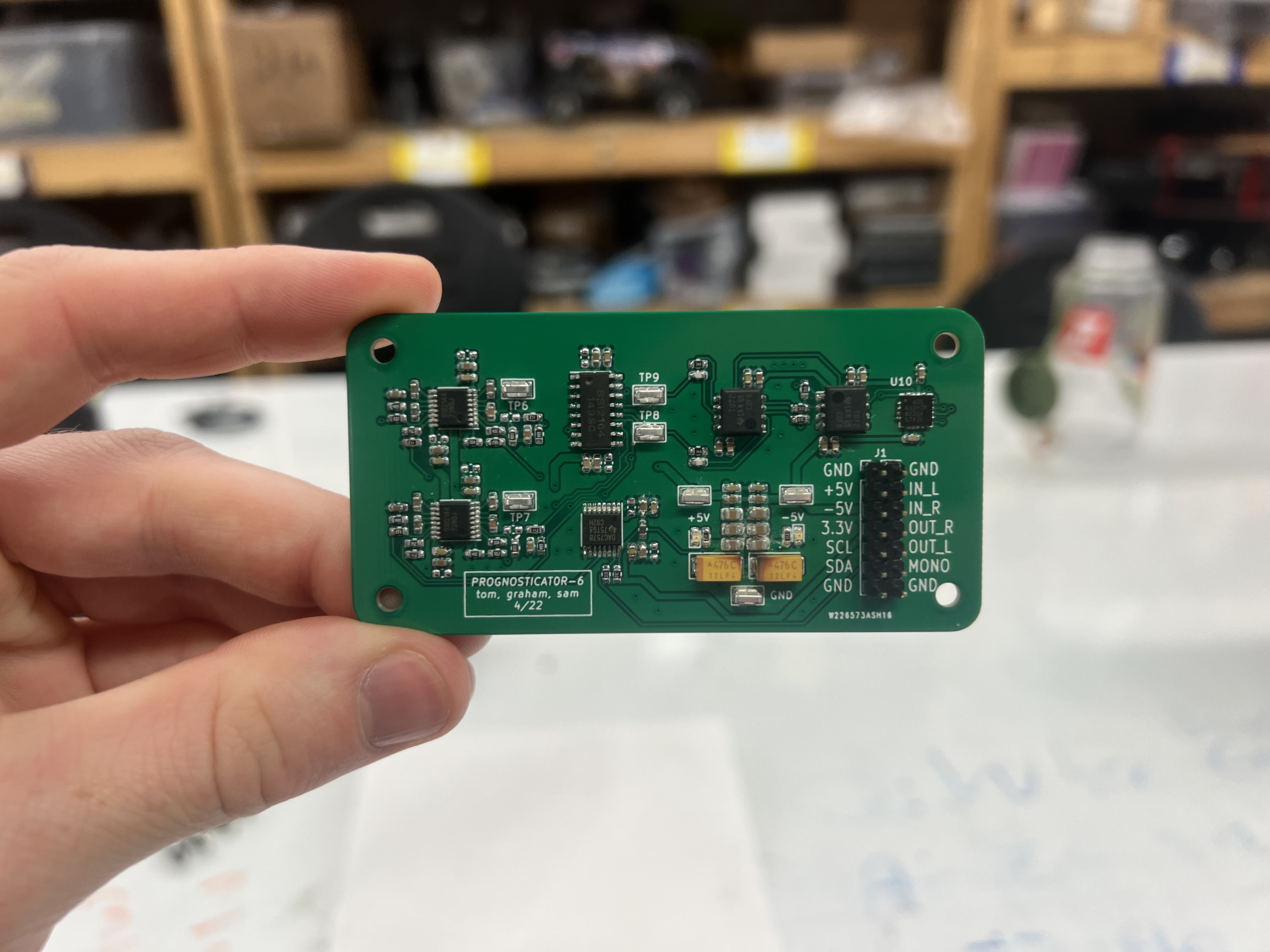

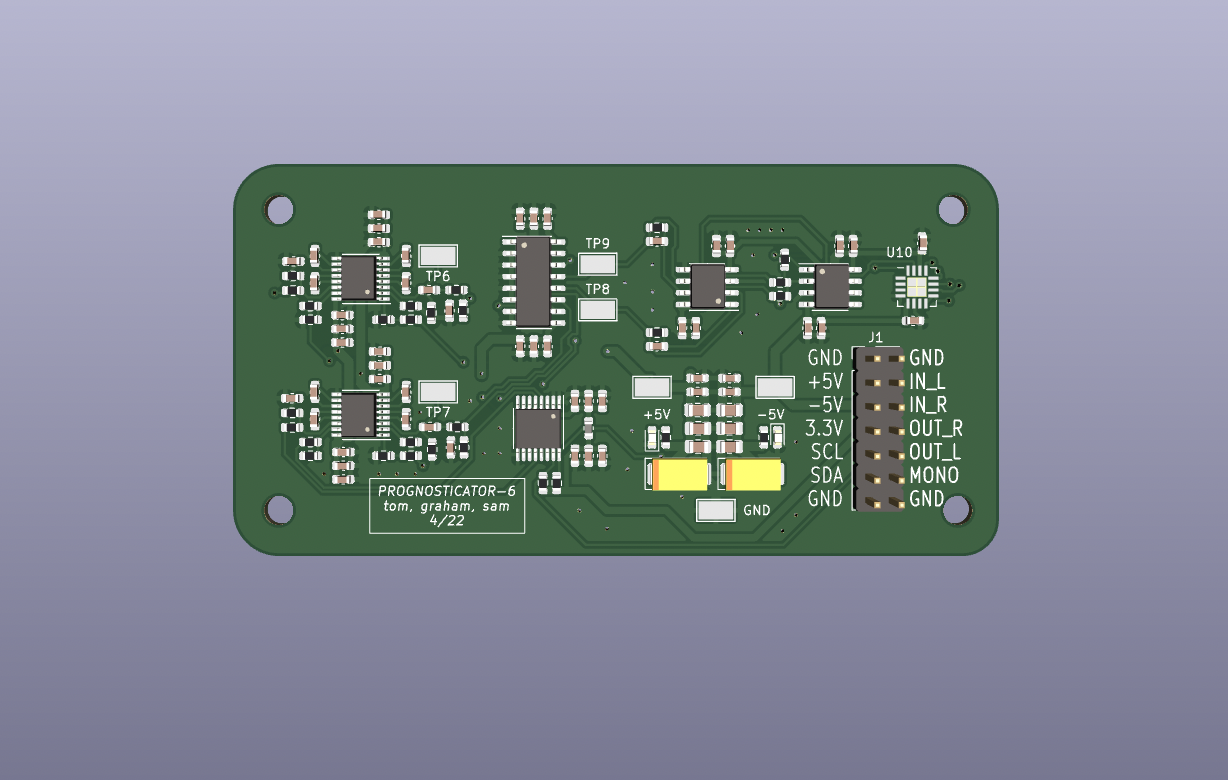

Last week I ordered the PCBs and said that to stay on schedule I should have assembled boards by the following week (right now). Luckily, the PCBs arrived a little earlier than expected!

Everything looks great and they passed basic electrical tests (power rails not shorted, etc.) Since the Mouser and Digikey BOM arrived a few weeks ago, I stenciled the board with solder paste, placed all the components, and reflowed it. There were a few shorts and tombstoned capacitors that I had to fix manually, but the finished board looks great:

Bring-up was smooth and the board is ready to be fully tested. I forgot to order 0603 10 µF capacitors, so there are some unplaced footprints on the board. These are just decoupling capacitors, and there is ample capacitance even without them, so I don’t foresee any issues. I’ve tested all the basic functionality (control signal DAC via I2C, filters, amplifier), including adjusting filter resonance and cutoff. Everything is working, and now I can fully test and characterize the board and generate Bode plots of the filter response.
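For the Bode plots, each measured input/output amplitude pair turns into a gain in dB, and the cutoff can be estimated from where the gain drops 3 dB below the passband. The sketch below uses synthetic data from an ideal one-pole low-pass at 1 kHz, not measurements from the board. The function names and the interpolation approach are assumptions.

```python
import math

def gain_db(v_in, v_out):
    """Voltage gain in decibels."""
    return 20.0 * math.log10(v_out / v_in)

def cutoff_hz(freqs, gains, drop_db=3.0):
    """Frequency where the gain falls drop_db below the first (passband) point.

    Interpolates linearly in log-frequency between the two bracketing points.
    """
    target = gains[0] - drop_db
    for i in range(len(freqs) - 1):
        g0, g1 = gains[i], gains[i + 1]
        if g0 >= target > g1:
            t = (g0 - target) / (g0 - g1)
            lo, hi = math.log10(freqs[i]), math.log10(freqs[i + 1])
            return 10.0 ** (lo + t * (hi - lo))
    return None

# Synthetic sweep: ideal one-pole low-pass at 1 kHz (illustrative, not measured).
FC = 1000.0
freqs = [100.0, 200.0, 500.0, 1000.0, 2000.0, 5000.0]
gains = [gain_db(1.0, 1.0 / math.sqrt(1.0 + (f / FC) ** 2)) for f in freqs]
estimate = cutoff_hz(freqs, gains)
print(f"estimated cutoff: {estimate:.0f} Hz")
```

A denser frequency sweep around the expected cutoff would tighten the estimate. With resonance turned up, the passband reference point would need to be chosen well below the resonant peak.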

This puts us about a week behind, which should be OK because we have a lot of momentum now to push through the final bit of the project. To finish up, next week the deliverable should be a fully tested analog board and integration with the microcontroller.

This week+, I spent most of my time continuing to wrestle with PetaLinux, this time version 2018.3, which is supported for the Ultra96v2. Unfortunately, despite significant effort (easily 40 hours’ worth of attempted builds), I was unable to build a PetaLinux image on my system. I think the only solution is to run the right native Ubuntu version and use an officially Xilinx-supported board, such as a ZedBoard or one of the ZCU series. At this point I decided to cut our losses and switch the digital side of the system from the Xilinx Zynq to a Raspi + MCU approach, which has a far simpler toolchain. The main reason I wanted to use the Zynq in the first place was to figure out the embedded Linux build process, but without good mentorship and a working toolchain this is infeasible in the time I have, on top of the rest of the system software and mechanical design.

I’ve already set up simple Python scripts on the Pi, verified that the display works, and begun to implement the Qt application.

The new architecture for the digital hardware has two possible approaches, depending on the balance between the MCU and Raspi as well as the comms link speed:

The simple option puts the oscillators and filter control onto the STM32, since real-time I2S output is the only thing the Raspi can’t easily do (44 kHz real-time I2S is simple on the STM32 but not on the Raspi, although it is possible with certain modifications). The main issue is that filter/envelope info has to be sent repeatedly over the comms link, which limits the interpolation density for the envelopes if we’re running in real time. I estimated that with the 36-byte payload (6 voices + envelopes + amplifiers) we’d be able to update oscillators/filters at 320 Hz, which should be more than sufficient. We could easily do the firmware for this on a bare-metal STM32.
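The update-rate estimate is simple arithmetic on link speed and payload size. The report does not state the link type or speed, so the 115200 baud 8N1 UART below is an assumption, chosen because it reproduces the 320 Hz figure exactly. The 44.1 kHz sample rate is likewise assumed.

```python
def update_rate_hz(baud, payload_bytes, bits_per_byte=10):
    """Frames per second on a serial link.

    bits_per_byte=10 assumes 8N1 framing: 1 start bit, 8 data bits, 1 stop bit.
    """
    return baud / (payload_bytes * bits_per_byte)

PAYLOAD_BYTES = 36        # from the estimate in the report
SAMPLE_RATE_HZ = 44_100   # assumed audio sample rate

rate = update_rate_hz(115_200, PAYLOAD_BYTES)   # assumed link speed
samples_per_update = SAMPLE_RATE_HZ / rate

print(rate)                # 320.0
print(samples_per_update)  # 137.8125
```

Under these assumptions the STM32 would interpolate envelope and filter values across roughly 138 audio samples between updates. A faster link or a smaller payload raises the update rate proportionally.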

If for whatever reason we run into issues here, the next step is to move all real-time synthesis to the STM32. This adds significant complexity to the STM32 software, but avoids the need to send anything over comms in real time.

Additionally, I obtained two Raspberry Pi 3B+ boards and a Raspberry Pi 4 to use for the synth, as well as an STM32F4 development board with a built-in I2S DAC that we can use instead of the off-system I2S DAC. I’ve tested the Raspi but have not tried to get the STM32 toolchain up yet, although I’ve used these parts before and I’m familiar with the system.

Lastly, I designed the front panel layout for the synth, including final hole positions and silkscreen/laser etching files. Sam is in the process of finalizing the design drawings for manufacturing so there might be a few modifications, but it looks like this right now. (This is at 300dpi, so feel free to open the full image and zoom on the details.)

Next week, I’m planning on working mostly on the Qt application and getting the STM32 toolchain set up. I started working on the Qt application last week, but there’s not really anything to show besides that the toolchain works and that there are placeholder GUI elements and some simple frameworks for patch parameters. I don’t think we’ll have any trouble running Qt on the Raspi, but I want to test that ASAP as well.

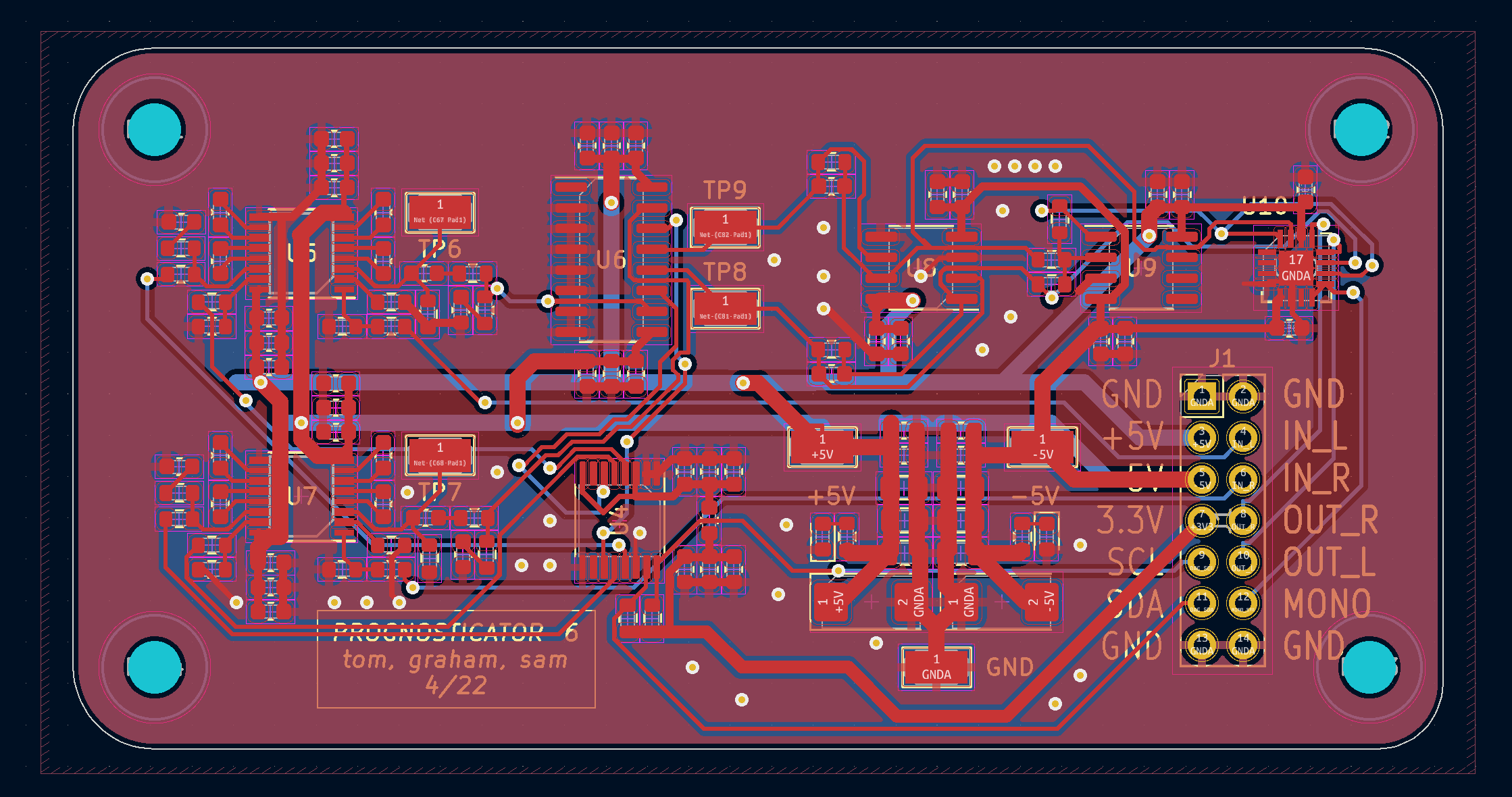

I have completed the PCB layout and ordered the boards from PCBWAY in China. It’s a fairly small 2-layer board with typical design rules and about 100 components, so the board house can manufacture it quickly (2 days) and we can solder everything in a few hours.

The chip shortage caused a number of the components to go out of stock while I was laying out the board, forcing me to start over. Luckily, we were able to get the whole BOM finalized and ordered for this PCB layout. All parts, including filter ICs, are in hand. Once the PCB arrives, we can solder all the components (reflow with stencil) and begin bring-up and testing.

Laying out the board took longer than expected and put us a little more than a week behind. To get back on schedule, we need to have the board in-hand by the end of this coming week and verify that all the component footprints are correct. The main deliverable for next week should be a soldered PCB that is ready for testing and integration into the enclosure.

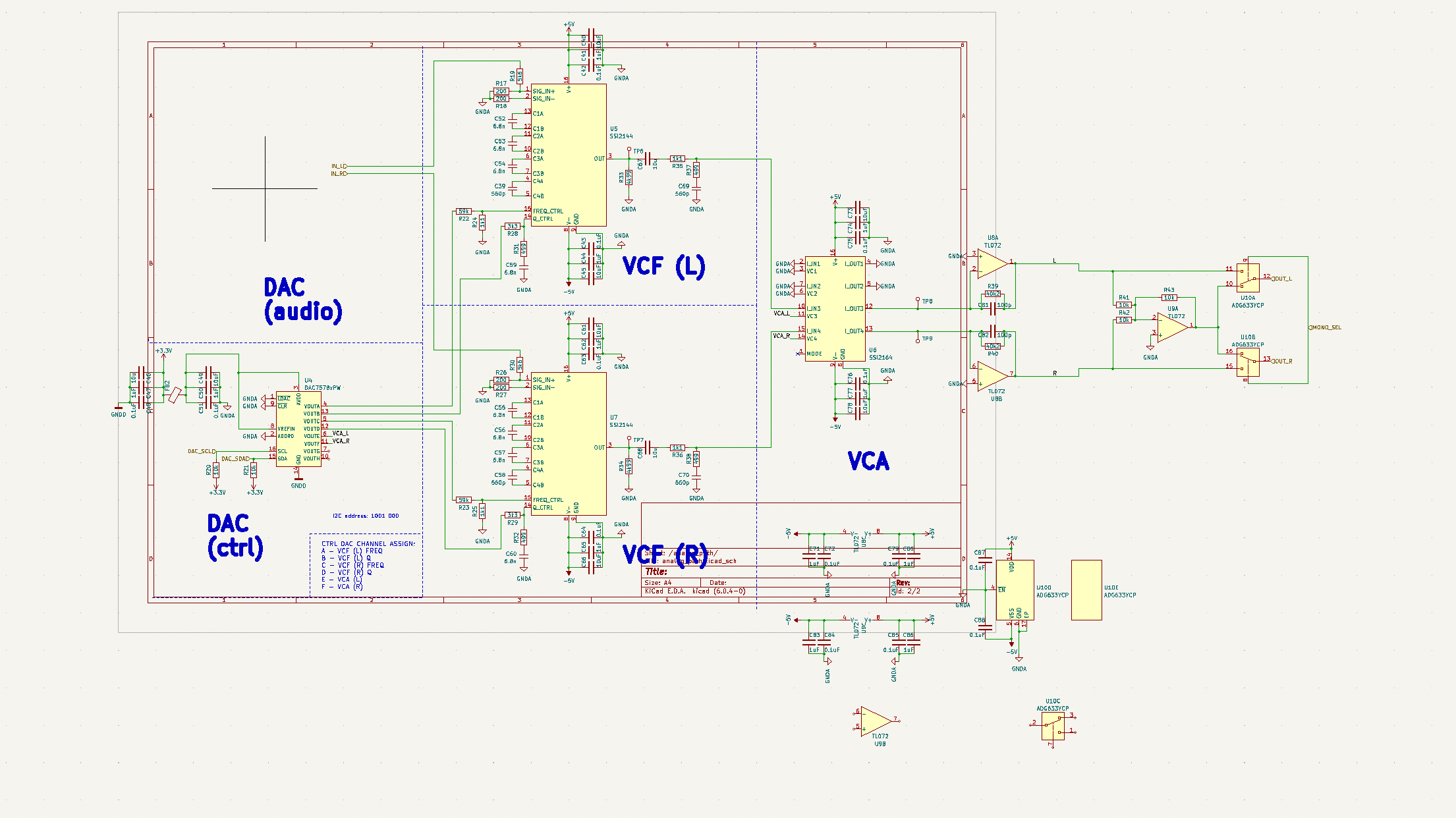

Last week I said that to get back on schedule I would need to create the finalized schematic, component footprints, and BOM. I’ve done all of this as well as ordered the BOM and begun PCB layout.

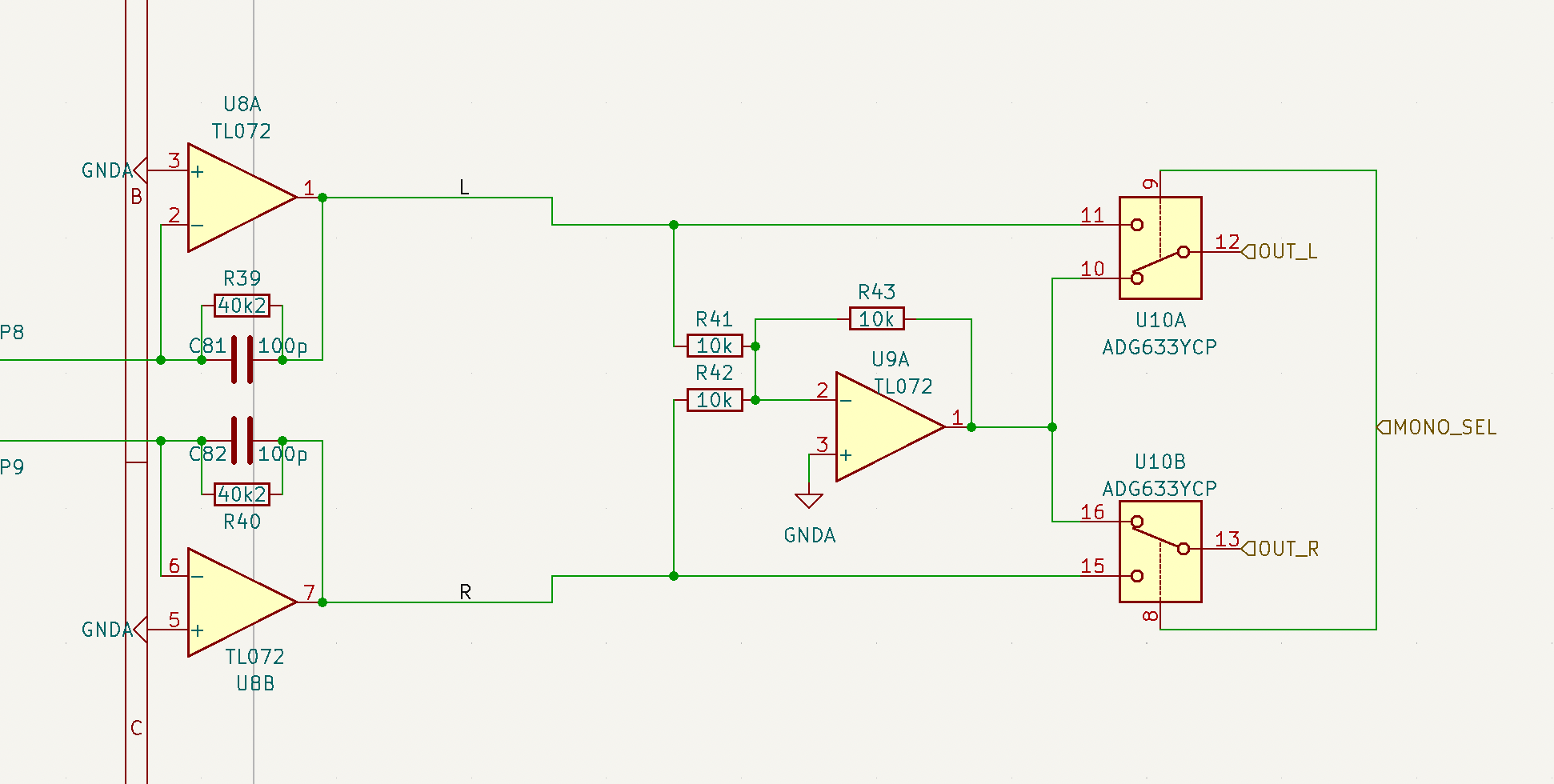

The final schematic does not include an I2S audio DAC, as we will use an external DAC breakout board from Adafruit for this. Since this is a 2-channel synth, we want to give the user the option to select either true stereo output or an analog sum of the two channels for mono output. I added some circuitry on the right side of the schematic to accommodate this:

Two (right, left) audio signals enter on the left and are buffered. Then a bidirectional analog switch mux IC (U10) selects either the raw buffered signals or a summed version from the output of U9. OUT_L and OUT_R are then sent to the rear panel audio jacks. MONO_SEL is the mux control signal.
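The behavior of this output stage can be modeled in a few lines. This is a behavioral sketch only: the summing gain of 0.5 and the choice to send the summed signal to both jacks are assumptions, since the schematic details are not given here.

```python
def output_stage(left, right, mono_sel, sum_gain=0.5):
    """Model of the stereo/mono selection; returns (OUT_L, OUT_R).

    sum_gain is an assumed gain for the summing stage (U9 in the schematic).
    """
    if mono_sel:
        mono = sum_gain * (left + right)
        return mono, mono          # assumed: both jacks carry the summed signal
    return left, right             # stereo: buffered signals pass straight through

print(output_stage(0.25, 0.75, mono_sel=False))  # (0.25, 0.75)
print(output_stage(0.25, 0.75, mono_sel=True))   # (0.5, 0.5)
```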

I have also begun the PCB layout. This puts us almost on time, but still lagging a little. For next week, I need to complete the entire PCB layout, pass DRC, and prepare to order it from PCBWAY.

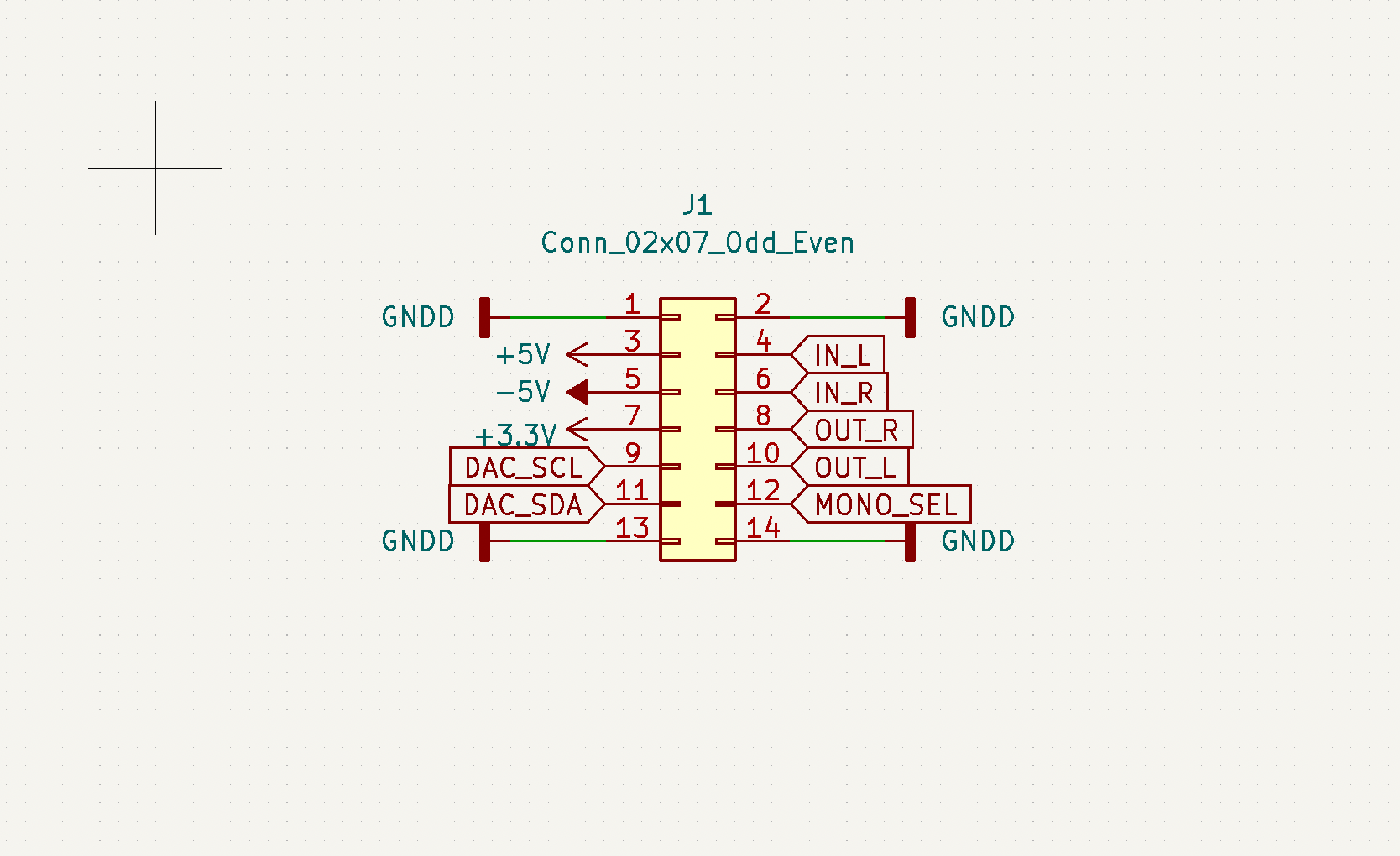

This week I went back to the schematic design for the analog PCB and redesigned the interface. Since we may switch FPGA boards (PYNQ-Z2 to Ultra96) to get better documentation and support, it will be beneficial to make the analog electronics more flexible and agnostic of the input signal format. So, instead of having a 40-pin header that perfectly matches the chosen FPGA board’s GPIO, I modified the interface to have a smaller header that does not directly connect to any FPGA or microcontroller board but can instead be manually wired to accommodate any digital controller.

DAC_SCL and DAC_SDA are the serial signals to the control signal DAC which drives VCFs and VCAs. MONO_SEL is a control signal that will select between stereo or mono (summing) outputs. And the remaining pins are audio in and out. This simplifies the analog board interface and treats it more as a filter passthrough board.

This backpedaling and questioning whether we should change FPGA boards puts us about a week behind schedule. To get back on track, next week I need to finish the stereo/mono summing circuitry and make progress on the board design. The main deliverable for next week is a finalized schematic, BOM, and component footprints.

Things went very well this week! We switched boards from the PYNQ to the Ultra96. This last-minute decision was admittedly a little desperate, but it has really helped our progress. I tried, to no avail, to integrate MIDI with the Ultra96 this week. However, I now understand more about the board’s UART input and should be able to finish this next week.

My to-do list for next week is to work through Ultra96 Vitis and Vivado basics, integrate MIDI input with the Ultra96, finish and fab the front panel, and solder many more encoders onto I2C boards.
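For reference, the MIDI side reduces to reading bytes from a UART (standard MIDI runs at 31250 baud) and decoding three-byte note messages. The sketch below is in Python rather than the Ultra96 toolchain and is deliberately simplified: it handles only note-on and note-off, ignores running status and all other message types, and its function names are hypothetical.

```python
def note_to_hz(note, a4_hz=440.0):
    """Equal-tempered frequency for a MIDI note number (69 = A4)."""
    return a4_hz * 2.0 ** ((note - 69) / 12.0)

def parse_midi(data):
    """Return a list of (kind, channel, note, velocity) from a raw byte stream."""
    events = []
    i = 0
    while i + 3 <= len(data):
        status = data[i]
        kind = status & 0xF0          # upper nibble: message type
        channel = status & 0x0F       # lower nibble: MIDI channel
        if kind in (0x80, 0x90):
            note, velocity = data[i + 1], data[i + 2]
            # A note-on with velocity 0 is conventionally treated as note-off.
            is_on = kind == 0x90 and velocity > 0
            events.append(("on" if is_on else "off", channel, note, velocity))
            i += 3
        else:
            i += 1                    # skip bytes this sketch does not handle
    return events

stream = bytes([0x90, 69, 100, 0x80, 69, 0])
print(parse_midi(stream))
print(note_to_hz(69))  # 440.0
```

The decoded note number feeds the oscillator frequency through `note_to_hz`, and the on/off events would drive the amplifier envelopes.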

Currently we are behind schedule, but if I can complete my list and we can order a PCB and get started with the oscillators, then we should be able to finish most of the sound synthesis the week after and be back on schedule.

Well… rest in peace to the PYNQ. We have decided to make the last (viable) minute board switch to the Ultra96. We have already made more progress on the Ultra96 than we ever did with the PYNQ which is promising. There is much more documentation on the Ultra96 than the PYNQ. This board switch will truly help us move along our project and kickstart our sluggish development phase.

There are a few risks we must handle carefully. If our first PCB iteration does not function as desired, we may not be able to fabricate a second iteration in time and could lose our analog pathway. Our first attempt must be our absolute best. Everything else should integrate nicely.

This coming week, we will begin wave synthesis. Sam will finalize and order the PCB, Graham will integrate MIDI and finish the front panel, and Tom will work on synthesis modules on the Zynq.