H4AR Product Pitch

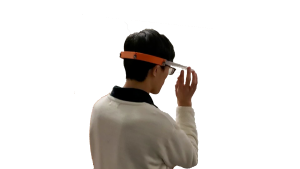

H4AR is a device that assists hard-of-hearing people in their everyday lives by visually alerting them to the direction of important sources of sound. The device is a wearable pair of glasses, equipped with an array of microphones and an augmented-reality heads-up display. The microphone array identifies human speech and determines the direction of the sound. This information is displayed as a visual cue in the form of an arrow on the heads-up display, greatly increasing the individual’s peripheral awareness by allowing them to identify and turn towards people talking to them.

You can watch our final video here

Ram’s Status Update – 6 December

Tasks Accomplished

(We worked together on most things this week so all of the following was done together with the other two team-mates.)

- Froze code

- Did the angular resolution test, latency test, noise test; analyzed results

- Cut and glued the face-shield onto the device and adjusted it for comfort

- Made another video, to use for the final presentation

- Finished up the final presentation

Deliverables for upcoming week

- Make the final demo video

- Do the final report

Will’s Status Update for 12/6

Tasks :

- Full integration complete

- Ran compass tests (measure at known angles, check whether prediction within error bounds)

- Ran latency tests (time between a sound being heard and the symbol appearing)

- Prepared for final presentation
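The compass test above compares a predicted bearing against a known one, so the comparison has to treat 359 and 3 degrees as only 4 degrees apart. A minimal sketch of such a check follows; the function names and the `tolerance_deg` parameter are hypothetical and are not taken from our firmware.

```python
def angular_error(predicted_deg: float, actual_deg: float) -> float:
    """Smallest absolute difference between two bearings, in degrees (0 to 180)."""
    diff = (predicted_deg - actual_deg) % 360.0
    return min(diff, 360.0 - diff)


def within_bounds(predicted_deg: float, actual_deg: float, tolerance_deg: float) -> bool:
    """True if the prediction lies within the allowed error of the known angle."""
    return angular_error(predicted_deg, actual_deg) <= tolerance_deg


# Example: a source placed at 3 degrees, predicted at 359 degrees.
print(angular_error(359.0, 3.0))
print(within_bounds(359.0, 3.0, tolerance_deg=15.0))
```

Taking the difference modulo 360 before comparing avoids reporting a large error when the prediction and the true angle sit on opposite sides of 0.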

Deliverables:

- Video

- Blog Post

- Final Report

Leon’s Status Update for 12/6

Tasks Accomplished

- Full integration complete – all parts assembled into a fully functioning standalone package

- Accessible SWD ports for flashing new firmware

- USB-C for charging the battery

- Ran the battery test for 12 hours before pulling the plug, which far exceeds our target metric

Deliverables for upcoming week

- Make video and blog post

Leon’s Status Update for 11/29

Tasks Accomplished

- Finished assembling the display module and battery/compute modules

- Put together the wire harness for the mic array to interface with the other modules

Deliverables for upcoming week

- Finish integrating with the mic array

- Figure out a way to flash new firmware versions onto the board without taking apart the module

Team Status Update 11/22

Tasks Accomplished:

We completed several hardware iterations and a lot of fabrication this week. Software also made decent progress: it can now handle a 4-mic setup, and we have a clear list of the software fixes needed to improve precision. Since the prototype in use is very similar to the final wearable, we are confident that final integration will not be too difficult.

Potential Risks:

We are now getting really down to the wire on time. The biggest risk is that, at this stage, any bugs will likely have to be handled with software fixes or workarounds because of the hardware turnaround time (and the unfortunate closure of the makerspace due to COVID cases). There is also not much time for another iteration round, so the integration results early this week will be crucial.

Will’s Status Update 11/22

This week saw some good progress on the software side. Most of this was done by pair-programming with Ram.

- Input audio waveform correction. Previously, each mic input was offset from 0 by some amount. We adjusted each audio waveform so that it is centered around 0. This means that, as far as the acousticSL library is concerned, the audio activation level is consistent across all waveforms (i.e., no one waveform is arbitrarily larger or smaller than the others).

- Integrated the 4 microphones into acousticSL (through multiple library and callback instantiations). We also derived an ad-hoc implementation for reconciling the output angles of TDOA on two pairs of microphones into a single output angle (by adjusting both to a common reference angle and then taking the measurements that are consistent across both pairs). The ad-hoc implementation is taken with reference to a parallel line going through one of the mics, so this needs further refinement.

- Augmented the square sums smoothing for both the raw input angles and also the converged angle from multiple measurements.

- Rough latency test is promising; precision test is still worrisome
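To illustrate the waveform correction in the first item above, here is a minimal sketch that centers one block of samples around 0. It assumes the bias is estimated as the mean of the block; the function name is hypothetical, and the actual firmware may instead use a fixed calibration value or a high-pass filter.

```python
from statistics import fmean


def remove_dc_offset(samples):
    """Return the samples shifted so that their mean is zero."""
    if not samples:
        return []
    offset = fmean(samples)  # estimated bias of this mic for this block
    return [s - offset for s in samples]


# Two mics hearing the same signal with different biases end up identical.
mic_a = [2048, 2050, 2046]
mic_b = [1900, 1902, 1898]
print(remove_dc_offset(mic_a))
print(remove_dc_offset(mic_b))
```

After this step, an amplitude threshold applied to each channel means the same thing for every mic.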

To-Dos for this week:

- Robustness to noise and adjustments for precision (at least get to 30 degrees for the OLED screen)

- Increasing the sampling frequency; balancing smoothing and latency (i.e., how much to smooth, how long to smooth)

- Add a third pair of mics (possibly adjust the physical arrangement) to improve angle resolution

- Move data processing logic gradually into callbacks — this might allow us to be more lock-step when interacting with acousticSL

Some geometry of interest:

Leon’s Status Update for 11/22

Tasks Accomplished

- Finished the part iteration of the display module, as well as the design of the battery and compute module. The design now takes a smaller footprint. If we have enough time to spare for one more stepping, I can further distribute the parts in the battery/compute module, but that seems unlikely as of now

- Fabricated the display module and battery/compute modules

- Much higher resolution than previous print, used the form printers this time around

- Had to start a makeshift isopropyl alcohol washing setup since the parts were not properly washed on pickup

- Also had to create a makeshift UV curing station since there was not enough sunlight to cure the parts quickly

Ram’s Status Update: 11/22

This week, we made some good progress on the software side (Will and I pair-coded for most of this).

- We did some code clean-up that made transitioning to 4 mics easier

- Geometry: we did the math to figure out how to reconcile the output angles of TDOA on two pairs of mics, and implemented this

- We debugged the existing solution for smoothing, and improved it some more, to balance the trade-off between latency and smoothness

- Did a rough overall latency test which was promising

- We still do not have 15 degree precision based on some rough tests, so we will need to work on that

- Unrelated: Found a potential fix to my laptop issue
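As a rough illustration of the geometry item above: a single mic pair can only tell the angle between the source and its own axis, so each pair yields two mirror-image bearings. The sketch below assumes a far-field source, two pairs whose axis orientations are known, and a delay that is positive when the source lies toward the positive end of the axis. All names and the layout are hypothetical, and this is not the exact math we implemented.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, approximate value at room temperature


def pair_candidates(delay_s, spacing_m, axis_deg):
    """Two mirror-image bearings (degrees, common frame) that fit one pair's delay."""
    cos_theta = max(-1.0, min(1.0, SPEED_OF_SOUND * delay_s / spacing_m))
    theta = math.degrees(math.acos(cos_theta))
    return [(axis_deg + theta) % 360.0, (axis_deg - theta) % 360.0]


def angular_gap(a_deg, b_deg):
    """Smallest absolute difference between two bearings, in degrees."""
    diff = (a_deg - b_deg) % 360.0
    return min(diff, 360.0 - diff)


def reconcile(cands_a, cands_b):
    """Pick the candidates that agree best across pairs and return their midpoint."""
    combos = [(a, b) for a in cands_a for b in cands_b]
    best_a, best_b = min(combos, key=lambda p: angular_gap(p[0], p[1]))
    # Average as unit vectors so the midpoint is correct across the 0/360 seam.
    x = math.cos(math.radians(best_a)) + math.cos(math.radians(best_b))
    y = math.sin(math.radians(best_a)) + math.sin(math.radians(best_b))
    return math.degrees(math.atan2(y, x)) % 360.0


# Simulated source at 60 degrees; pair A lies along 0 degrees, pair B along 90.
d = 0.1  # mic spacing in metres (hypothetical)
delay_a = d * math.cos(math.radians(60.0 - 0.0)) / SPEED_OF_SOUND
delay_b = d * math.cos(math.radians(60.0 - 90.0)) / SPEED_OF_SOUND
print(reconcile(pair_candidates(delay_a, d, 0.0), pair_candidates(delay_b, d, 90.0)))
```

Only one bearing is consistent with both pairs, which is what resolves the mirror ambiguity of each individual pair.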

To-Dos This Week:

- Make it more robust to noise – if there are very loud and close-by keyboard noises, it currently does not work

- Adding a third pair of mics – either an actual third pair in between the current ones, or a “virtual” third pair that uses data from two mics that are currently part of different pairs

- Increasing the sample frequency – we don’t really need to yet, but we anticipate that we might need to do this soon (so that we have more breathing room in terms of latency, and less lag when transitioning)

- Move a lot of the code currently in the main while loop into callbacks – the advantage of this is that these callbacks can’t be interrupted so the volatile variables that we change will definitely not be modified in the middle (currently, this isn’t a problem but it could be so it’s probably good to do this move)

- Smoothing Improvements

- Account for 0 essentially being the same as 360 – so that the smoothing algorithm takes into account the fact that changes from e.g. 3 to 359 are actually fine, and these are still smooth

- Implement a feature where if we haven’t seen 10 angles in a row, we just cut off all our prior sample knowledge – because those priors are likely unreliable
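The two smoothing improvements above could be sketched together as follows. This is only an illustration under stated assumptions: it swaps in a circular mean over a short window in place of our current smoothing method, and it reads "haven't seen 10 angles in a row" as 10 consecutive frames with no detected angle. The class name, the window size, and the `max_misses` parameter are hypothetical.

```python
import math
from collections import deque


class AngleSmoother:
    """Circular-mean smoother that forgets its history after a run of misses."""

    def __init__(self, window=8, max_misses=10):
        self.history = deque(maxlen=window)
        self.max_misses = max_misses
        self.misses = 0

    def update(self, angle_deg):
        """Feed one frame (an angle in degrees, or None if nothing was detected)."""
        if angle_deg is None:
            self.misses += 1
            if self.misses >= self.max_misses:
                self.history.clear()  # old samples are likely unreliable by now
        else:
            self.misses = 0
            self.history.append(angle_deg)
        if not self.history:
            return None
        # Average unit vectors so that 359 and 3 average to 1, not 181.
        x = sum(math.cos(math.radians(a)) for a in self.history)
        y = sum(math.sin(math.radians(a)) for a in self.history)
        return math.degrees(math.atan2(y, x)) % 360.0


smoother = AngleSmoother()
smoother.update(359.0)
print(smoother.update(3.0))
```

Averaging sines and cosines rather than raw degrees is what makes a jump from 3 to 359 look like the small change it really is.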