Roshan Nair – Weekly Status Update #11

Since this is the last week before the public demo, it has mostly been about wrapping up effects and cleaning up minor bugs. Delay/echo is complete with a variable delay; however, it clips very briefly when changing from a short delay to a very long one. I am still looking into the cause. I am also working on adding reverb. Both effects will require additional logic in the memory controller to arbitrate between requests, since the memory is shared between modules.
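As a rough illustration of the arbitration mentioned above, here is a minimal Python model of a round-robin arbiter. Round-robin is an assumed policy chosen only for the sketch, and the function name and request layout are hypothetical; the actual memory controller logic may look quite different.

```python
def round_robin_grant(requests, last_granted):
    """Pick the next requester after `last_granted` that is asserting a request.

    requests     -- list of booleans, one per module sharing the memory (assumed layout)
    last_granted -- index of the module that was served most recently
    Returns the index to grant, or None when nobody is requesting.
    """
    n = len(requests)
    for offset in range(1, n + 1):
        idx = (last_granted + offset) % n  # start searching just after the last winner
        if requests[idx]:
            return idx
    return None
```

With two modules requesting at once (for example delay and reverb), the grant alternates between them, so neither can starve the other.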

Team A0 – Weekly Status Update #11

This week we are finalizing our project for demo day. We’ve recently expanded our effects list to include band-pass filters, pitch shifting, and echoing. As a result, we now have most of the effects we planned to implement, which is a success for us. Before demo day we want to make sure that the quieter effects are amplified enough that they can all be heard clearly.

Nick Saizan – Weekly Status Update #11

This week I made the framework for a chorus effect module. This effect adds the original audio to a delayed copy of itself. The delay, however, is variable, and in this case is modulated by a triangle wave. The delay is implemented with a BRAM instantiation, since a maximum of 30 ms of audio is required. I am currently running simulations on the module to make sure it behaves as expected. Once I’m sure the datapath is working correctly, I plan to run synthesis tests to hear how it sounds.
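The chorus datapath described above can be modeled in a few lines of Python. This is only a behavioral sketch: the sample rate, modulation rate, and 50/50 mix are assumed values, and a plain list stands in for the BRAM delay line.

```python
def triangle(n, period):
    """Triangle wave in [0, 1] with the given period in samples."""
    phase = (n % period) / period
    return 2 * phase if phase < 0.5 else 2 * (1 - phase)


def chorus(samples, sample_rate=48000, max_delay_ms=30.0, lfo_hz=0.5, mix=0.5):
    """Mix each input sample with a copy delayed by a triangle-modulated amount."""
    max_delay = int(sample_rate * max_delay_ms / 1000)  # delay-line depth in samples
    buf = [0.0] * (max_delay + 1)                       # stands in for the BRAM
    period = int(sample_rate / lfo_hz)                  # modulation period in samples
    out = []
    for n, x in enumerate(samples):
        buf[n % len(buf)] = x                           # write the newest sample
        delay = int(round(triangle(n, period) * max_delay))
        delayed = buf[(n - delay) % len(buf)]           # read `delay` samples back
        out.append((1 - mix) * x + mix * delayed)
    return out
```

At 48 kHz, 30 ms corresponds to 1440 samples, which is why a small on-chip memory is enough for this effect.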

Nick Paiva – Weekly Status Update #11

This week I worked on frequency filtering, pitch shifting, and a sine function lookup table.

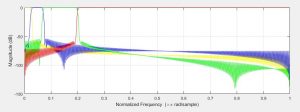

The frequency filtering uses a 512-point FIR filter, so the transitions at the cutoff frequencies are pretty sharp. I’ve calibrated the filters to isolate different parts of the audio track, which leads to some very interesting effects. I generate the impulse response using MATLAB, and then turn it into a lookup table for the FPGA to use. The plot above shows the filters I am using.
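For readers without MATLAB, the general idea of generating a band-pass impulse response and turning it into a fixed-point lookup table can be sketched in Python. The design method here (a Hamming-windowed sinc), the band edges, the sample rate, and the 15 fractional bits are all assumptions for illustration; the filters used in the project may have been designed differently.

```python
import cmath
import math


def bandpass_taps(num_taps, low_hz, high_hz, sample_rate):
    """Hamming-windowed sinc band-pass: difference of two ideal low-pass responses."""
    mid = (num_taps - 1) / 2
    taps = []
    for n in range(num_taps):
        t = n - mid
        if t == 0:
            ideal = 2 * (high_hz - low_hz) / sample_rate
        else:
            ideal = (math.sin(2 * math.pi * high_hz * t / sample_rate)
                     - math.sin(2 * math.pi * low_hz * t / sample_rate)) / (math.pi * t)
        window = 0.54 - 0.46 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    return taps


def quantize(taps, frac_bits=15):
    """Round taps to signed fixed-point integers, as a hardware lookup table would store them."""
    scale = 1 << frac_bits
    return [int(round(t * scale)) for t in taps]


def fir(samples, taps):
    """Direct-form FIR: each output is the dot product of the taps with recent inputs."""
    out = []
    for n in range(len(samples)):
        acc = 0.0
        for k, h in enumerate(taps):
            if n - k >= 0:
                acc += h * samples[n - k]
        out.append(acc)
    return out


def gain_at(taps, freq_hz, sample_rate):
    """Magnitude of the filter's frequency response at one frequency."""
    w = 2 * math.pi * freq_hz / sample_rate
    return abs(sum(h * cmath.exp(-1j * w * k) for k, h in enumerate(taps)))
```

For example, `quantize(bandpass_taps(512, 1000, 4000, 48000))` yields 512 integers that could be pasted into a ROM initialization file, and `gain_at` gives a quick check that the passband and stopband land where intended.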

Pitch shifting is a basic time-domain implementation. I used the BRAM to set up a ring buffer. Data goes into the ring buffer at the audio sampling frequency, but the output pointer moves slower or faster depending on the pitch shift selected. Linear interpolation is used for fractional sample positions. I did not expect this implementation to sound very good, but it actually works pretty well. Using the filtering block to remove the lowest frequencies of the track before shifting makes it sound even better.
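The ring-buffer scheme described above can be modeled roughly as follows. The buffer length and the floating-point read pointer are assumptions made for readability; a hardware version would more likely use a fixed-point pointer with integer and fractional bits.

```python
def pitch_shift(samples, ratio, buffer_len=1024):
    """Time-domain pitch shift: write at the sample rate, read at `ratio` times that rate.

    ratio > 1 raises the pitch, ratio < 1 lowers it (assumed convention).
    """
    buf = [0.0] * buffer_len        # stands in for the BRAM ring buffer
    read_pos = 0.0                  # fractional read pointer
    out = []
    for n, x in enumerate(samples):
        buf[n % buffer_len] = x     # write pointer advances one slot per input sample
        i = int(read_pos)
        frac = read_pos - i
        a = buf[i % buffer_len]
        b = buf[(i + 1) % buffer_len]
        out.append(a + frac * (b - a))  # linear interpolation between neighbors
        read_pos = (read_pos + ratio) % buffer_len
    return out
```

Because the two pointers move at different speeds, they eventually cross, which produces a discontinuity in the output. This simple model does nothing to hide that.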

The sine function LUT is going to be used to make a new amplitude modulation effect. It is almost complete, but the interpolation between samples is not quite working just yet. When it works, the new effect should be trivial to implement.
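One common way to interpolate between sine table entries is sketched below in Python with integer arithmetic. The 256-entry table, 8 fractional phase bits, and 16-bit amplitude are assumed sizes, and this is not a description of the FPGA implementation or of the bug mentioned above.

```python
import math

TABLE_BITS = 8                      # assumed: 256-entry table
TABLE_SIZE = 1 << TABLE_BITS
FRAC_BITS = 8                       # assumed: 8 fractional phase bits
SINE_LUT = [round(math.sin(2 * math.pi * i / TABLE_SIZE) * 32767)
            for i in range(TABLE_SIZE)]


def sine_lookup(phase):
    """Return an interpolated sine value for an unsigned 16-bit phase word."""
    phase &= (1 << (TABLE_BITS + FRAC_BITS)) - 1
    index = phase >> FRAC_BITS                  # upper bits select the table entry
    frac = phase & ((1 << FRAC_BITS) - 1)       # lower bits give the position between entries
    a = SINE_LUT[index]
    b = SINE_LUT[(index + 1) % TABLE_SIZE]      # wrap so the last entry blends toward entry 0
    # Signed multiply followed by an arithmetic right shift
    return a + (((b - a) * frac) >> FRAC_BITS)
```

Two details that are easy to get wrong in a scheme like this are the wraparound at the end of the table and keeping the difference `b - a` signed through the multiply and shift.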

Nick Saizan – Weekly Status Update #9

This week I wrote the tremolo effect module and got it working in hardware. When I was planning this effect I considered using a sine wave for modulation. However, given our time constraints, it seems more reasonable to use a simpler square wave. It still creates a cool effect but requires much less time to put together. After testing in simulation I brought the module to hardware and resolved some timing bugs, ultimately making the effect work reliably.
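A square-wave tremolo amounts to switching the gain between two levels at the modulation rate. Here is a minimal Python model, where the `depth` parameter and the specific gain levels are assumptions rather than details from the hardware module.

```python
def tremolo_square(samples, sample_rate, rate_hz, depth=0.5):
    """Alternate the gain between 1.0 and (1.0 - depth) at `rate_hz`."""
    half_period = max(1, int(sample_rate / (2 * rate_hz)))  # samples per gain level
    out = []
    for n, x in enumerate(samples):
        high = (n // half_period) % 2 == 0   # True during the first half of each cycle
        gain = 1.0 if high else 1.0 - depth
        out.append(x * gain)
    return out
```

In hardware the same behavior needs only a counter and a two-way choice of gain, which fits the time savings mentioned above.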

Nick Paiva – Weekly Status Update #9

This week I worked on updating the display to use the onboard block RAM, wrote a new effect, and tried to get the FFT working in simulation.

I updated the display driver because using LUTs dramatically increased the compile time. Changing it to use M10K block RAM was relatively simple.

The new effect I wrote tries to emulate the clipping we were seeing with our older code. It is called the “deep fry” effect.

Interfacing with the FFT seems to be relatively simple. It uses a simple ready/valid interface, but I cannot get it to compile properly in simulation. I spent all day today trying to get it to compile in various simulation software packages, to no avail. At this point, I will either abandon the FFT or try to get it working through synthesis alone.

Nick Saizan – Weekly Status Update #8

This week was mainly two things. First, I finished up the MIDI decoding interface so that we could effectively use it to apply our effects to the audio stream. It has been working very well since then. The other task I’ve begun is the amplitude modulation effect. The plan is to use key velocity to set the modulation frequency and to use the aftertouch pressure to change the amplitude/depth of the modulation. This way a high-energy key press will give a high-energy modulation, and a low-energy key press will give a low-energy one.
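The planned mapping from MIDI values to modulation parameters could look something like the Python sketch below. MIDI velocity and channel pressure are 7-bit values (0 to 127); the linear mapping and the 1 to 20 Hz range are assumptions chosen only for illustration.

```python
def modulation_params(velocity, pressure, min_hz=1.0, max_hz=20.0):
    """Map key velocity to modulation rate and aftertouch pressure to depth.

    velocity, pressure -- 7-bit MIDI values (0..127); out-of-range inputs are clamped
    Returns (rate_hz, depth) with depth in [0.0, 1.0].
    """
    velocity = max(0, min(127, velocity))
    pressure = max(0, min(127, pressure))
    rate_hz = min_hz + (max_hz - min_hz) * velocity / 127  # harder press, faster modulation
    depth = pressure / 127                                 # more pressure, deeper modulation
    return rate_hz, depth
```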

Roshan Nair – Weekly Status Update #8

This week I fixed some of the remaining bugs with bit crushing and panning over MIDI. Additionally, I have started the Verilog and microarchitecture for echoing, which is currently being tested in simulation but will soon be tried on the FPGA using the SDRAM chip. The MIDI keyboard will be able to dynamically change the delay of the echo based on the key pressure.

Additionally, I briefly helped Nick P. debug the display controller.

Team A0 – Weekly Status Update #8

This week we worked on polishing off the MIDI decoder, some simple effects, and the display driver. The MIDI decoder is currently working very well, and we are able to use it to control our effects. The display driver is still in the testing stage, but it will not be difficult to get it to print out more interesting information. Overall we have made a lot of progress this week.