The global spread of the coronavirus has truly changed our lives, moving us to a remote environment where contact with others is limited. This has affected not only the way we work but also our ability to stay in shape, and it has led to a rise in the popularity of at-home workout options that range from free fitness applications such as Nike Training App to high-end systems like Mirror. We’re introducing Falcon the Pro Gym Assistant (FPGA), a revolutionary and affordable workout system that guides a user through a customizable workout plan while providing accurate real-time feedback.

Vishal’s Status Report for 12/5/20

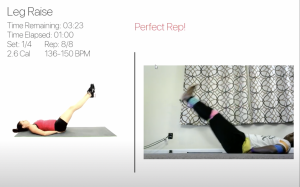

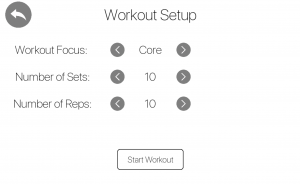

This week I mostly worked on getting the UI ready to present for our demo on Wednesday. The main UI task was the main menu, which links the various pages within the UI. I also created the workout setup screen and the settings screen, in which the user can customize their workout as well as their profile information. Next, I enabled profile switching and saved unique states for each user in the SQLite database. Finally, I cleaned up the overall flow and details of all the pages and made sure that the different aspects were integrated properly.

After the demo I worked a bit on the Spotify integration. I have a login menu for each profile, and I started working on the UI for Spotify. This UI will let the user play, pause, skip to the next song, go back to the previous song, and see the song currently playing on their device. I have a bit more work to do to actually get it to display, but the button logic works properly with the API.

In the next few weeks I will be creating clips for Albert that demonstrate the UI and the features we were able to implement after the recording we made before Thanksgiving. After that I will finish the Spotify UI buttons and get the section to display properly. In addition, I will be preparing for the presentation this Monday and then starting on the design report.

Team’s Status Report for 12/5/20

Our main priority for this week was working on the demo. Since we are all back home and in different time zones, it was fairly hard for us to meet, but we were able to plan and practice for our demo effectively. We focused on the final touches needed for integrating our project and were able to fully demonstrate our progress during the demo. For the upcoming week, our main priorities are working on the video and the final presentation.

There were no major risks identified this week, and our schedule and the existing design of the project have not changed at all.

Albert’s Status Report 12/5/20

This week I added audio feedback to our application. Instead of only returning written feedback, our application now also returns audio feedback. Since the user may be doing a leg raise or pushup and may not be looking at the screen, the audio feedback helps the user perfect his or her form. I converted some text to mp3 files and changed the outputs sent to the User Interface. I used Amazon’s Joanne as the audio voice. In terms of the feedback that we received for the live demo, I looked into the skeleton feedback and realized that I would have to redo a lot of the posture analysis as well as the image processing because we don’t really have the opportunity to re-record the entire workout. Therefore, the best I can do is to feed our current screen recording into the algorithm. However, the screen recording does some processing to the live feed, so the HSV values are not consistent with the originals. We realized that it may be too much work to re-record since we are all in different physical locations.
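As a rough illustration of how written feedback could be paired with pre-generated mp3 clips, here is a minimal sketch. The folder name, the feedback strings, the file names, and the `feedback_outputs` helper are all hypothetical and are not taken from our codebase.

```python
from pathlib import Path

# Assumed folder that holds the pre-generated mp3 clips.
AUDIO_DIR = Path("audio")

# Hypothetical mapping from a feedback message to its audio clip.
FEEDBACK_AUDIO = {
    "Keep your back straight": "back_straight.mp3",
    "Raise your legs higher": "legs_higher.mp3",
}


def feedback_outputs(message):
    """Return the text to display and the clip to play (None if no clip exists)."""
    filename = FEEDBACK_AUDIO.get(message)
    clip = AUDIO_DIR / filename if filename else None
    return message, clip
```

Returning both values together lets the UI keep showing the text even when a message has no matching clip.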

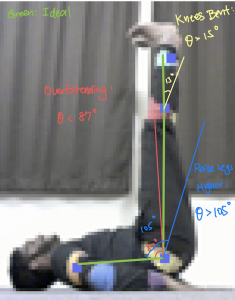

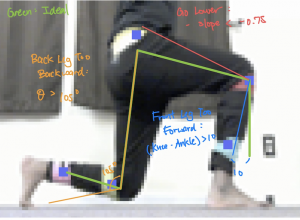

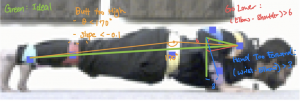

Since the final presentation is this week, I had to create the slides and organize the metrics and test benches that I created in previous weeks. Also, I am mostly in charge of assembling the final video, so I planned out the time stamps for each section of the video. I distributed tasks to Venkata and Vishal so that they can give me short clips of their portions. I generated diagrams for the posture analysis (shown below).

I also edited the code so that it saves an image of the binary mask after every significant step. These diagrams will help me record the technical portions on image processing and posture analysis. I played around with iMovie to get familiar with it. I created an ending scene and have started to cut and edit the videos that we want.

Next week, I will mainly be focusing on producing the video. The following week, I will work on completing the final report.

Venkata’s Status Report for 12/5/20

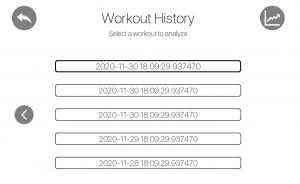

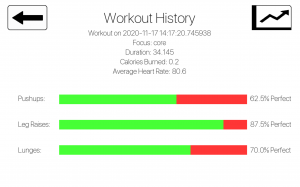

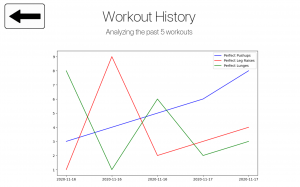

This past week was focused on getting the UI ready for the demo. I first added features such as navigation buttons that page through the database and grab the appropriate entries, and I extended the history trends page so that it combines workouts from the same day and standardizes the y-axis. I then cleaned up the UI by creating new icons and highlighting the buttons/icons when the user hovers over the various options.
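The two trends-page changes mentioned above (merging same-day workouts and using one y-axis scale) can be sketched as follows. This is only an illustration: the `(date, reps)` tuple layout and the function names `combine_by_day` and `shared_y_limit` are assumptions, not the actual page code.

```python
from collections import defaultdict


def combine_by_day(workouts):
    """Sum reps for workouts that share a date; return (date, total) pairs sorted by date."""
    totals = defaultdict(int)
    for day, reps in workouts:
        totals[day] += reps
    # ISO-formatted date strings sort chronologically.
    return sorted(totals.items())


def shared_y_limit(series_list, step=5):
    """Return one y-axis maximum, rounded up to a multiple of step, that fits every series."""
    peak = max((value for series in series_list for _, value in series), default=0)
    return ((peak + step - 1) // step) * step or step
```

With Matplotlib, the returned limit could be passed to `ax.set_ylim(0, limit)` on each graph so that all plots share the same scale.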

After working on the UI and cleaning up the Workout History Summary and Workout History Trends pages, I worked on integrating Spotify into our project. After learning about Spotipy, I was able to create a Developer app that connects to a user’s Spotify account. This allows our app to modify the user’s playback state by pausing/playing/skipping the current song and to identify the name of the current song. We were also considering cleaning up the authentication flow, but after our demo we decided that we should focus on other aspects, so I simply worked with Vishal to create a simple UI for the Spotify section and began working on the other deliverables.
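The playback controls described above could be wrapped roughly like this. It is a sketch rather than our actual code: the `PlaybackControls` class is hypothetical, and it assumes a client object exposing Spotipy-style methods (`start_playback`, `pause_playback`, `next_track`, `previous_track`, `currently_playing`).

```python
class PlaybackControls:
    """Thin wrapper that maps UI buttons onto a Spotify client.

    `client` is assumed to expose Spotipy-style playback methods.
    """

    def __init__(self, client):
        self.client = client

    def play(self):
        self.client.start_playback()

    def pause(self):
        self.client.pause_playback()

    def next_song(self):
        self.client.next_track()

    def previous_song(self):
        self.client.previous_track()

    def current_song_name(self):
        """Return the current track name, or None if nothing is playing."""
        playing = self.client.currently_playing()
        if not playing or not playing.get("item"):
            return None
        return playing["item"]["name"]
```

Keeping the client as a constructor argument means the button logic can be exercised with a stand-in object, without logging in to a real account.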

I am on track with the schedule. For the upcoming week, I plan on focusing on the deliverables, such as working on the final presentation and providing Albert with the video/audio files he needs to integrate our sections into the final video. I will then work on the final report the week after that.

Vishal’s Status Report for 11/21/20

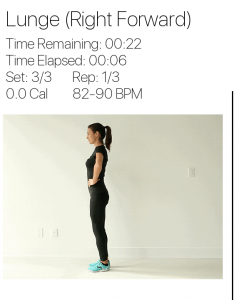

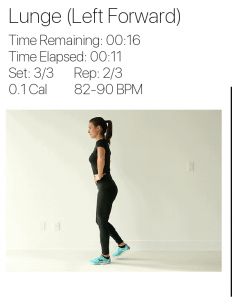

This week I made a lot of progress that has made the overall project a lot more robust. I started off the week refining the changes I made last week for displaying the feedback from the FPGA. It turned out that when the entire project was run together with the hardware and the signal processing, the feedback was a little delayed, especially for pushups. I had to edit the GIFs and reimplement the timing for the workouts so that the final rep of a set has more time remaining, which lets the user properly read their feedback before moving on to the next workout. After fixing the timing for the different workouts, and more specifically the pushup exercise, I moved on to implementing a second type of lunge and fixing the GIF for the lunge.

We originally had the lunge implemented with the right leg forward, but we changed it so that two different types of lunges are shown, including a version where the left leg is forward. With the help of Venkata I was able to apply the new GIFs, since the old ones ended up being too pixelated and did not flow cohesively in the user interface. In order to accommodate both types of lunge I had to refactor some of the code and implement new logic.

I worked on making sure that the images are captured periodically and that their scaling and coloring are correct. The frame from OpenCV came back in blue scale, and I had to convert it so that it could be consumed by Albert’s signal processing, since in the past we had been using photos from a saved folder that were taken by an external webcam application.
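One common reason a frame looks blue is that OpenCV stores color frames in BGR channel order while most other tools expect RGB. Assuming that was the cause here (the post does not say so explicitly), the conversion amounts to swapping the first and third channel of every pixel. The sketch below shows the idea on a packed byte buffer using only the standard library; `bgr_to_rgb` is a hypothetical helper, and with OpenCV itself the usual one-liner is `cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)`.

```python
def bgr_to_rgb(buffer):
    """Swap the blue and red channels of a packed 3-bytes-per-pixel buffer."""
    if len(buffer) % 3 != 0:
        raise ValueError("buffer length must be a multiple of 3")
    out = bytearray(buffer)
    out[0::3] = buffer[2::3]  # red values move into the first slot
    out[2::3] = buffer[0::3]  # blue values move into the third slot
    return bytes(out)
```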

I wrapped up the week by working with Albert and Venkata to record some workout footage that we will be using in our final demo, since we will all be heading home next week for Thanksgiving. We had some issues integrating, so I spent a little bit of time making sure those bugs were cleaned up.

In the upcoming weeks I will be making the main menu, which will connect all our different pages together, and then I will be integrating a profile customization screen.

Albert’s Status Report for 11/21/20

Earlier this week, I was working on refining the posture analysis using the extra 30 or so images that we captured last week. I changed the HSV values for the shoulder and wrist, so I had to edit them in the HLS code as well. The posture analysis was also fine-tuned. I also handled unlikely errors that could crash the program when there are duplicate points in the angle calculation.
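To show why duplicate points are a problem for the angle calculation, here is a minimal sketch of a joint-angle function that guards against them. The function name `joint_angle` and the choice to return `None` are assumptions for illustration, not the actual posture analysis code.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at joint b between points a and c.

    Returns None when a or c coincides with b, since a zero-length
    limb vector would otherwise cause a division by zero.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        return None
    cosine = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against floating-point drift outside [-1, 1].
    cosine = max(-1.0, min(1.0, cosine))
    return math.degrees(math.acos(cosine))
```

The caller can treat a `None` result as "skip this frame" instead of letting the whole program crash.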

This week we wanted to record the workout portion of the project as a whole because everyone will be gone for Thanksgiving and will not be back in Pittsburgh afterward. That meant the FPGA, webcam, application, and posture analysis sections had to be fully integrated. We set up everything to do the recording; however, things didn’t go as well as expected. The pictures I used when fine-tuning the HSV bounds came directly from the camera. However, in order to present the live feed from the webcam, the application uses OpenCV, which does some processing on its own. Vishal had to do processing to change it back to the original image, but there is still a difference in saturation between the images I get directly from the camera and the images captured and stored through OpenCV. I had to spend more than two hours pinpointing every joint and fine-tuning it again because of this discrepancy. Since I had a test bench and some functions written to speed up the process, it went a lot faster than it would have without the classes and functions I wrote previously.

Also, we decided to test the image processing portion without the dark suit that we built earlier, so it took longer to get rid of the noise from the different colored T-shirts and pants. While doing the final fine-tuning for the video, we decided to reuse tracker colors because certain colors are easier to track than others. Another problem is that since the workout is a live movement, a lot of the darker colors get blurred out. In the picture below, the red becomes a lot lighter than normal and sometimes it turns into light green for some reason. Since we originally anticipated using 8 joints but only actually need 5, we pinpointed the joints that are mutually exclusive across the workouts and switched them from the error-prone colors to less error-prone ones.
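For readers unfamiliar with HSV bounds, the sketch below shows the basic idea of thresholding an image into a binary mask for one tracker color. It uses only the standard library and is not the project's actual image processing code; the function names and the bound values are assumptions. Note that `colorsys` reports hue, saturation, and value on a 0 to 1 scale, which differs from the ranges OpenCV uses for 8-bit images.

```python
import colorsys


def in_hsv_range(rgb, lower, upper):
    """Return True if an (R, G, B) pixel (0-255 each) falls inside the HSV bounds."""
    r, g, b = (channel / 255.0 for channel in rgb)
    hsv = colorsys.rgb_to_hsv(r, g, b)  # each component is in [0, 1]
    return all(lo <= value <= hi for value, lo, hi in zip(hsv, lower, upper))


def binary_mask(image, lower, upper):
    """Build a 0/1 mask from a 2D list of RGB pixels."""
    return [
        [1 if in_hsv_range(pixel, lower, upper) else 0 for pixel in row]
        for row in image
    ]
```

A small shift in saturation, like the one described above, can move a pixel outside the bounds, which is why the bounds had to be tuned again for the OpenCV-captured images.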

Also, for some reason, the camera reversed left and right, so some of my posture analysis gave back incorrect results, which took a while to notice and debug. Since everything was in the main application and we were running it as a whole, it was pretty hard to isolate the bug and realize that the camera was flipped.
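One way to compensate for a mirrored camera is to flip the x-coordinate of every detected joint before running the posture analysis. The helper below is a hypothetical sketch of that idea, not the fix we actually applied; with OpenCV, the whole frame could instead be flipped horizontally with `cv2.flip(frame, 1)`.

```python
def unmirror_points(points, image_width):
    """Flip the x-coordinate of each named point across the vertical center line."""
    return {
        name: (image_width - 1 - x, y)
        for name, (x, y) in points.items()
    }
```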

Next week is Thanksgiving and I will be flying back to Asia, so I will not have a lot of time to work on Capstone. However, I will probably try to work on the audio feedback portion by sending audio recordings from online to the application.

Venkata’s Status Report for 11/21/20

This week was predominantly spent on tasks not focused on the hardware. Since I was able to meet our performance requirement for the FPGA, I decided to help out with the software side, specifically by working on parts of the UI that didn’t directly interfere with Vishal’s work. I decided to focus on the pages that provide workout history to the users. I first came up with a couple of mockups, and after discussing them with the rest of the team, I started coding up the various pages. I had to learn how to use the needed Python libraries (Pygame and Matplotlib) and the existing codebase for the Python application. I was then able to create the following three pages, which pull the appropriate information from our database and display it to the user.

- Workout History Options – Users will be able to navigate the database and pick a workout to analyze

- Workout History Summary – Users will receive a detailed summary of a selected former workout

- Workout History Trends – Users will receive a graph analyzing their performance over the past 5 workouts
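As an illustration of how the Trends page could pull the past 5 workouts, here is a minimal `sqlite3` sketch. The table name `workouts` and the columns `id`, `profile_id`, `workout_date`, and `reps_completed` are assumptions for this example and may not match our real schema.

```python
import sqlite3


def last_workouts(conn, profile_id, limit=5):
    """Return the most recent workouts for a profile, oldest first for plotting."""
    rows = conn.execute(
        "SELECT workout_date, reps_completed FROM workouts "
        "WHERE profile_id = ? "
        "ORDER BY workout_date DESC, id DESC LIMIT ?",
        (profile_id, limit),
    ).fetchall()
    # The query returns newest first; reverse so the graph reads left to right.
    return rows[::-1]
```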

I am on track with the schedule. For the upcoming week(s), I plan on working with Vishal to fully integrate the changes that I made and on refining the various pages to ensure that they are visually appealing. I will also do some more testing with the FPGA and integrate the new changes that Albert made to the image processing code.

Vishal’s Status Report 11/14/20

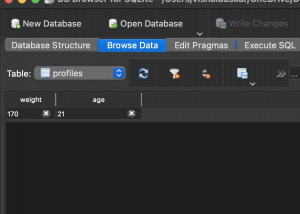

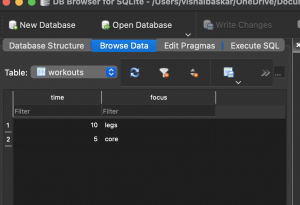

This week I was able to make a good amount of progress on both the integration and the database aspects of the application. For the database, I was able to create the database through Python code and set up two tables: one for profiles and their respective biodata and one for keeping track of workout data. Both of these tables are integrated into the UI code. At the end of a workout, the statistics for that specific workout are loaded into the database. Currently there is only one profile, but I will be integrating profile switching in the upcoming weeks. Here is a look at the database schema.
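For readers who want a concrete picture of a two-table layout like the one described, here is a minimal `sqlite3` sketch. The column names and the helpers `init_db` and `record_workout` are assumptions made for illustration; the actual schema may differ.

```python
import sqlite3

# Hypothetical two-table layout: one table for profiles, one for workouts.
SCHEMA = """
CREATE TABLE IF NOT EXISTS profiles (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL UNIQUE,
    age INTEGER,
    weight_kg REAL
);
CREATE TABLE IF NOT EXISTS workouts (
    id INTEGER PRIMARY KEY,
    profile_id INTEGER NOT NULL REFERENCES profiles(id),
    workout_date TEXT NOT NULL,
    exercise TEXT NOT NULL,
    reps_completed INTEGER NOT NULL
);
"""


def init_db(path=":memory:"):
    """Open the database and create both tables if they are missing."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def record_workout(conn, profile_id, workout_date, exercise, reps_completed):
    """Insert one finished workout and return its row id."""
    with conn:  # commits on success, rolls back on error
        cursor = conn.execute(
            "INSERT INTO workouts (profile_id, workout_date, exercise, reps_completed) "
            "VALUES (?, ?, ?, ?)",
            (profile_id, workout_date, exercise, reps_completed),
        )
    return cursor.lastrowid
```

Keeping `profile_id` on every workout row is what would later allow each profile to have its own history.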

In terms of integration, the feedback is now received by the application and displayed at the correct time in the UI. In the upcoming weeks I will be making sure that the UI works well overall and refining things that look buggy. It will be tested mostly by visual inspection, with some use of test frameworks.

Team’s Status Report for 11/14/20

This week we were able to complete our MVP, which entailed basic integration, by the time of our demo. We presented a product that was able to read in a random image, stream it to the FPGA, grab the feedback, and display the appropriate feedback in the terminal, fully synced with the User Interface. We also spent time collecting more images to help us train the posture analysis portion and ensure that we are able to provide the appropriate feedback, while also testing the image thresholds for the various joints in various lighting conditions.

This week, there were no changes made to the overall design of the project nor any major risks identified.