Our Work

Software

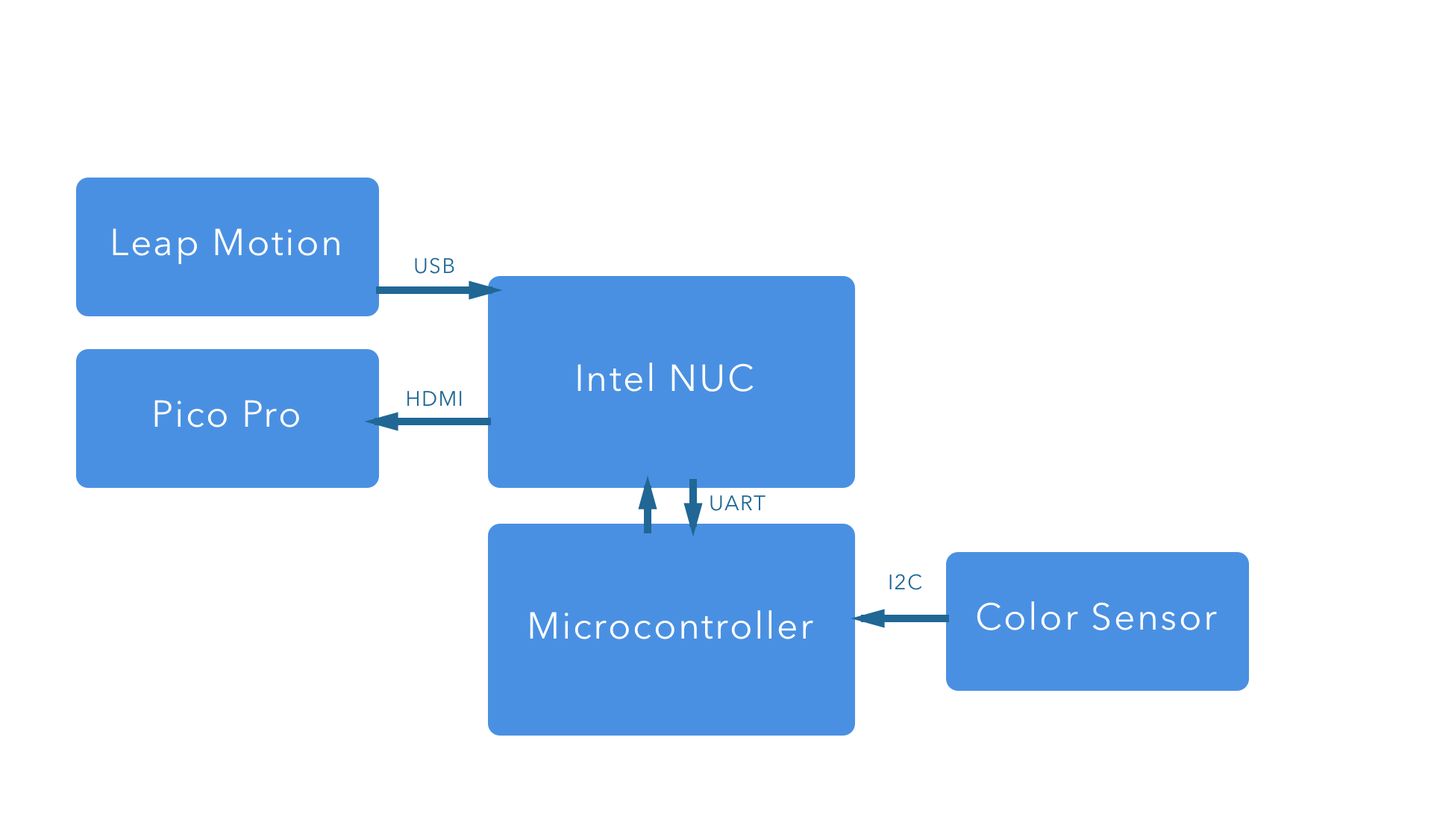

The primary software running on the SlapScreen is written in JavaScript and runs on Node.js. We chose Node.js for its platform independence: we could test prototypes on several different devices, including the Raspberry Pi, with the same software stack.

The main applications were served by an Express.js server and could be accessed from any web browser. Bleno received commands over Bluetooth Low Energy (BLE) from an iOS device, and Socket.IO pushed updates to the application in real time. Serial communication, the perspective transformation, and the business logic were written in a combination of Node.js and front-end JavaScript.
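
The data flow described above, from the phone over BLE to the server and out to every open browser, can be sketched as a small relay. This is a minimal, dependency-free sketch rather than the SlapScreen code: Node's built-in `EventEmitter` stands in for Socket.IO's broadcast, `handleBleWrite` stands in for the Bleno characteristic's write handler, and both the `CommandRelay` class and the JSON command format are hypothetical.

```javascript
const { EventEmitter } = require('events');

// Hypothetical relay: BLE writes in, broadcasts out.
// EventEmitter stands in for Socket.IO's broadcast so the sketch has no dependencies.
class CommandRelay extends EventEmitter {
  // Stand-in for the BLE characteristic's write handler (Bleno in the original).
  // Assumes the phone writes one UTF-8 JSON object per write; returns true if
  // the command was broadcast, false if the payload was rejected.
  handleBleWrite(data) {
    let command;
    try {
      command = JSON.parse(data.toString('utf8'));
    } catch {
      return false; // ignore malformed writes instead of crashing the server
    }
    if (command === null || typeof command !== 'object') return false;
    this.emit('update', command); // every subscribed client receives it
    return true;
  }
}

// Usage: two browser clients subscribe, then the phone sends two writes.
const relay = new CommandRelay();
const received = [];
relay.on('update', (cmd) => received.push(['client A', cmd.type]));
relay.on('update', (cmd) => received.push(['client B', cmd.type]));

relay.handleBleWrite(Buffer.from('{"type":"setActiveApp","app":"weather"}'));
relay.handleBleWrite(Buffer.from('not json')); // rejected, nothing is broadcast
console.log(received); // both clients received the setActiveApp command
```

Rejecting malformed writes instead of throwing keeps one bad packet from the phone from taking down the server that every browser depends on.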

Mobile

The mobile application was written in Swift 3 for iOS. It let users assign preferred applications to surfaces, switch the active application, and adjust the perspective transformation angle. All configuration of the SlapScreen was done over BLE using CoreBluetooth.
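
On the SlapScreen side, the three settings the app exposes can be modeled as commands applied to a configuration state. The sketch below assumes hypothetical command names (`setSurfacePreference`, `setActiveApp`, `setPerspectiveAngle`), a hypothetical state shape, and an angle given in degrees; the actual message format the app sent over BLE is not described here.

```javascript
// Hypothetical command types mirroring the three settings the iOS app exposes.
// State is never mutated; each command returns a new configuration object.
function applyCommand(state, cmd) {
  switch (cmd.type) {
    case 'setSurfacePreference': // preferred application for one surface
      return { ...state, preferences: { ...state.preferences, [cmd.surface]: cmd.app } };
    case 'setActiveApp': // application currently shown
      return { ...state, activeApp: cmd.app };
    case 'setPerspectiveAngle': // angle fed to the perspective transformation (assumed degrees)
      if (!Number.isFinite(cmd.degrees)) {
        throw new RangeError('degrees must be a finite number');
      }
      return { ...state, perspectiveAngle: cmd.degrees };
    default:
      throw new Error(`unknown command type: ${cmd.type}`);
  }
}

// Usage: replay a sequence of commands as they might arrive over BLE.
const initial = { preferences: {}, activeApp: null, perspectiveAngle: 0 };
const commands = [
  { type: 'setSurfacePreference', surface: 'kitchen-wall', app: 'recipes' },
  { type: 'setActiveApp', app: 'recipes' },
  { type: 'setPerspectiveAngle', degrees: 12.5 },
];
const finalState = commands.reduce(applyCommand, initial);
console.log(finalState);
```

Returning a new object for each command, instead of mutating the current one, makes it easy to compare the old and new configuration before pushing changes to the display.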

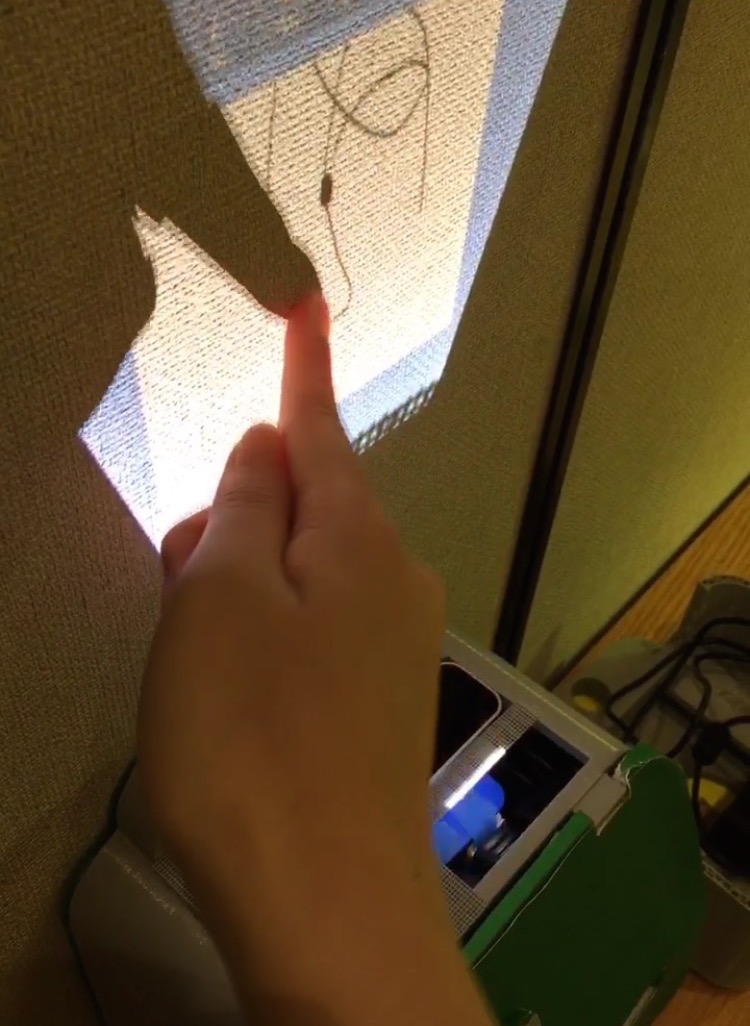

Perspective Transformation

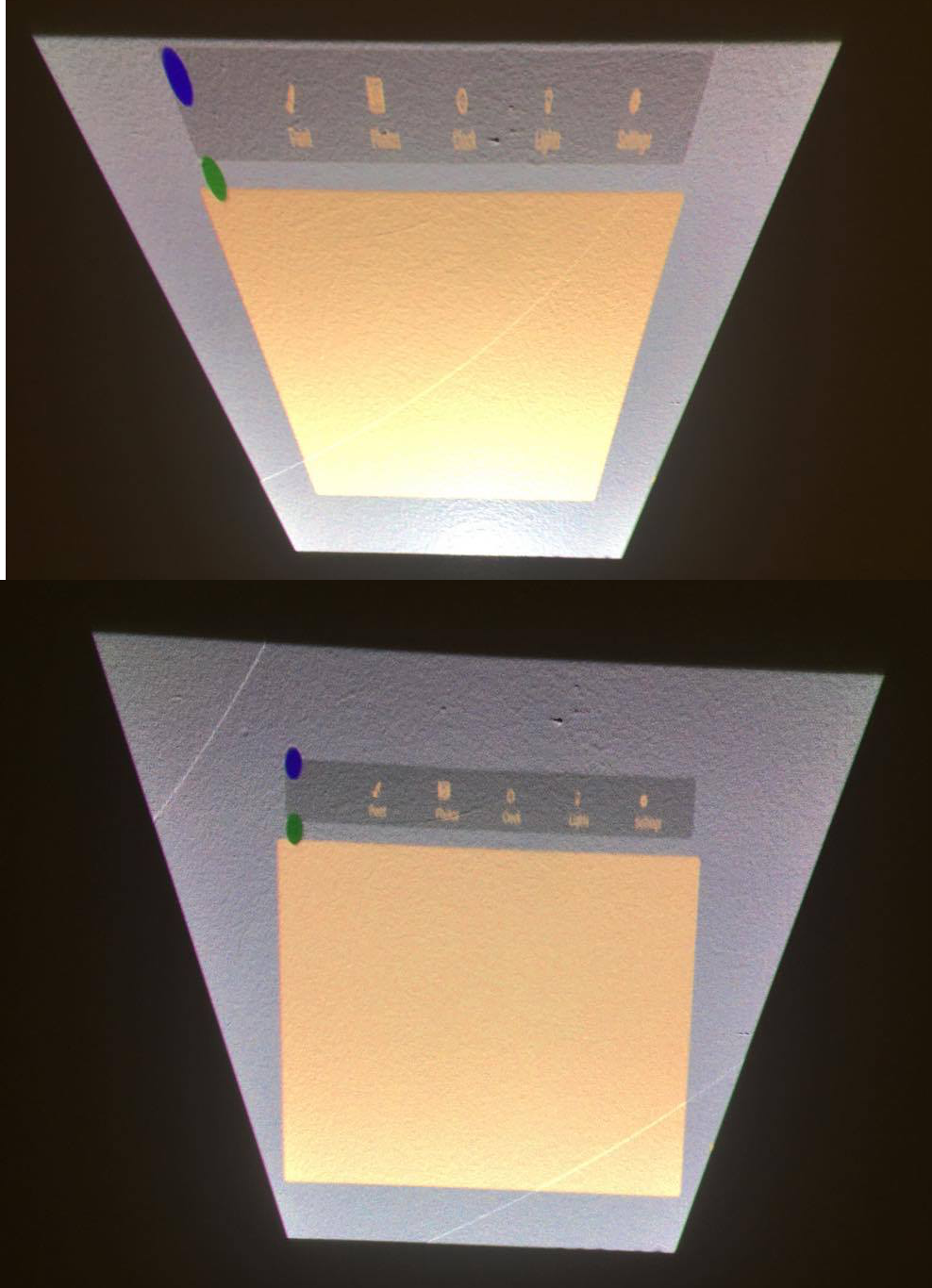

To minimize the height of the setup, we angled the projector so that it was not perpendicular to the surface. As a result, the displayed image suffered from the keystone effect: because of the misalignment, the image appears trapezoidal rather than rectangular. To correct this, we applied a perspective transform to the view using a matrix transformation. The correction also applied to the input, since we had to transform the absolute coordinates the Leap provides into the corrected coordinate space.
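
A common way to implement this kind of keystone correction is a 3×3 homography computed from four corner correspondences. The sketch below assumes that approach; the writeup does not say exactly how the matrix was derived. `homographyFromPoints` solves the standard 8-equation linear system, `applyHomography` maps a point (including the divide by the homogeneous coordinate), and `toCssMatrix3d` shows one hypothetical way to apply the matrix to a browser view. All function names and the calibration corners are illustrative.

```javascript
// Sketch: keystone correction with a 3x3 homography (assumed approach).

// Solve A·h = b by Gauss-Jordan elimination with partial pivoting.
function solve(A, b) {
  const n = b.length;
  const M = A.map((row, i) => [...row, b[i]]); // augmented matrix [A | b]
  for (let col = 0; col < n; col++) {
    // Swap in the row with the largest pivot for numerical stability.
    let pivot = col;
    for (let r = col + 1; r < n; r++) {
      if (Math.abs(M[r][col]) > Math.abs(M[pivot][col])) pivot = r;
    }
    if (Math.abs(M[pivot][col]) < 1e-12) throw new Error('degenerate corner configuration');
    [M[col], M[pivot]] = [M[pivot], M[col]];
    // Eliminate this column from every other row.
    for (let r = 0; r < n; r++) {
      if (r === col) continue;
      const f = M[r][col] / M[col][col];
      for (let c = col; c <= n; c++) M[r][c] -= f * M[col][c];
    }
  }
  return M.map((row, i) => row[n] / row[i]); // M is now diagonal
}

// Homography H (3x3, row-major, H[8] = 1) mapping each src[i] = [x, y] to dst[i] = [u, v].
// Each pair gives two equations from u = (h0x + h1y + h2) / (h6x + h7y + 1), same for v.
function homographyFromPoints(src, dst) {
  const A = [];
  const b = [];
  for (let i = 0; i < 4; i++) {
    const [x, y] = src[i];
    const [u, v] = dst[i];
    A.push([x, y, 1, 0, 0, 0, -x * u, -y * u]); b.push(u);
    A.push([0, 0, 0, x, y, 1, -x * v, -y * v]); b.push(v);
  }
  return [...solve(A, b), 1];
}

// Apply H to a point, including the divide by the homogeneous coordinate w.
function applyHomography(H, [x, y]) {
  const w = H[6] * x + H[7] * y + H[8];
  return [(H[0] * x + H[1] * y + H[2]) / w, (H[3] * x + H[4] * y + H[5]) / w];
}

// CSS matrix3d() takes a column-major 4x4; embed the 2D homography in it (z untouched).
function toCssMatrix3d(H) {
  const m = [H[0], H[3], 0, H[6], H[1], H[4], 0, H[7], 0, 0, 1, 0, H[2], H[5], 0, H[8]];
  return `matrix3d(${m.join(', ')})`;
}

// Hypothetical calibration: a 1280x720 view whose top edge is pulled in
// by 80 px on each side to cancel the projector's keystone.
const view = [[0, 0], [1280, 0], [1280, 720], [0, 720]];
const prewarped = [[80, 0], [1200, 0], [1280, 720], [0, 720]];

const H = homographyFromPoints(view, prewarped);    // warps the rendered view
const Hinv = homographyFromPoints(prewarped, view); // maps input back into view space

console.log(applyHomography(H, [1280, 0])); // approximately [1200, 0]
console.log(toCssMatrix3d(H));
```

For the CSS route, the element needs `transform-origin: 0 0` so the matrix is applied in the same top-left-origin coordinates used for the corners. For input, `Hinv` assumes the Leap coordinates have already been scaled into the same pixel space as the calibration corners; which of the two matrices belongs on which side depends on how the corners are measured.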

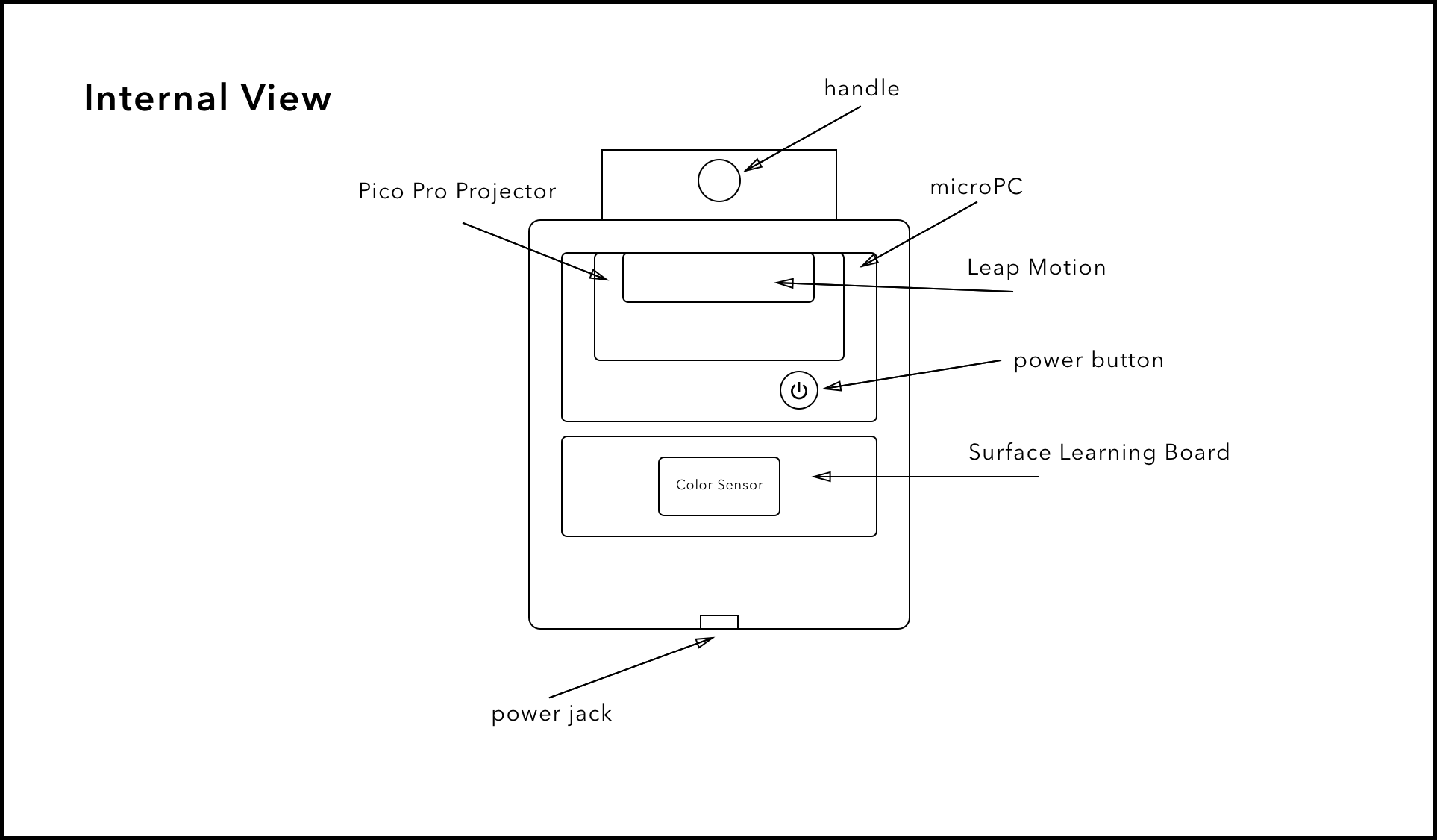

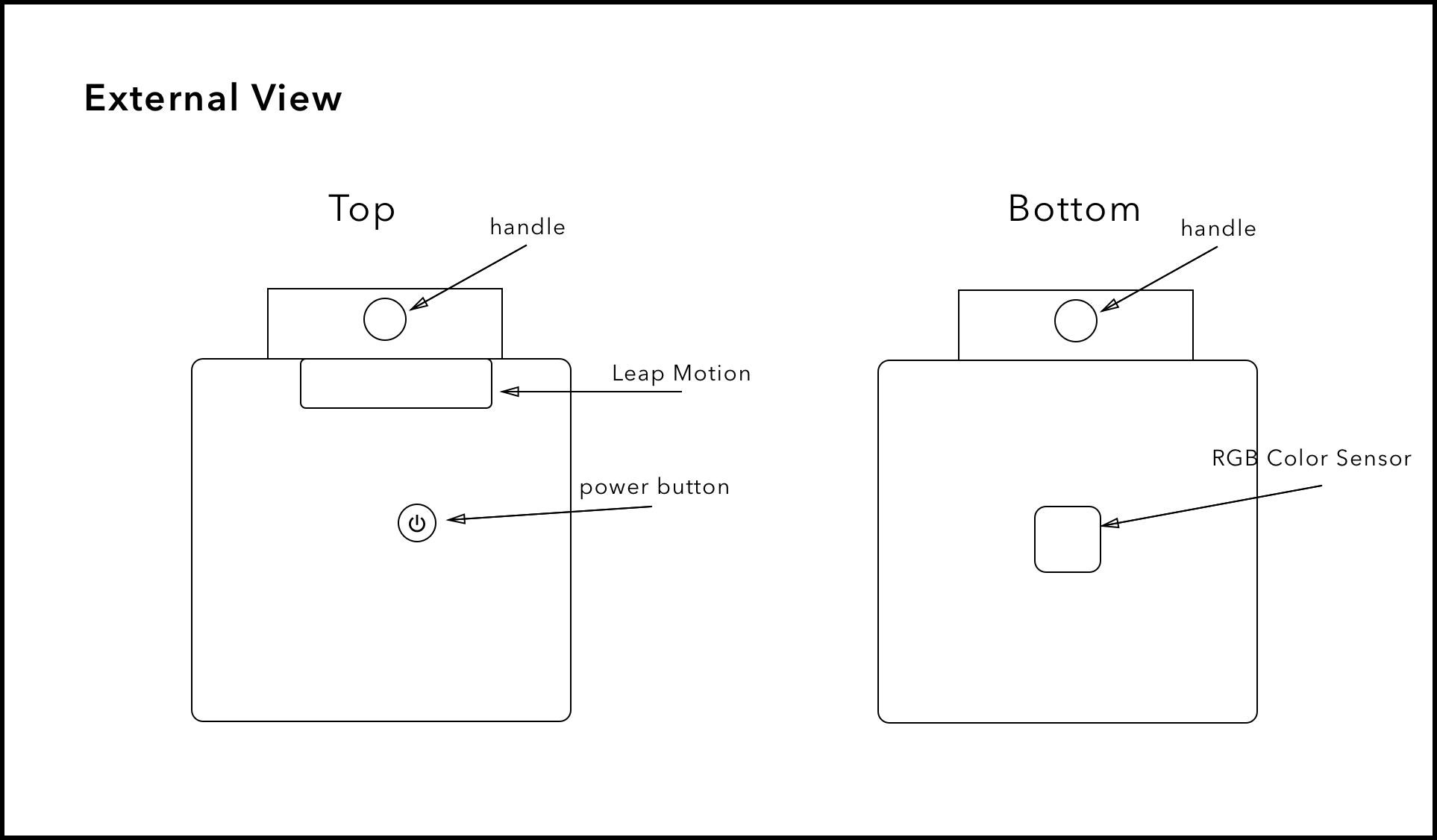

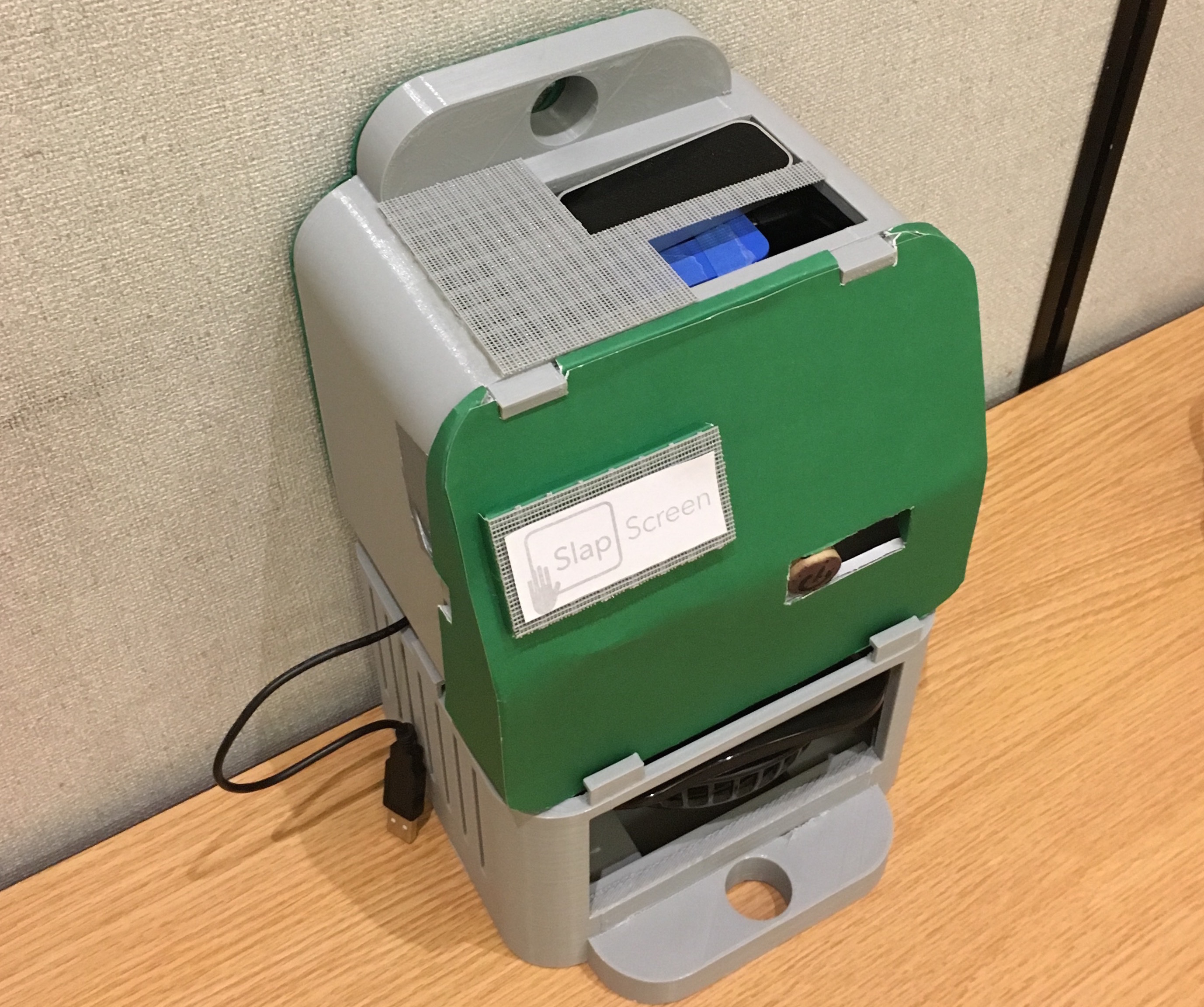

3D Modeling & Casing

One non-ECE aspect of our project is the modeling of the case. When designing it, we tried to house all the components in as little space and height as possible, while keeping the case accessible for modifications and well ventilated.